The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

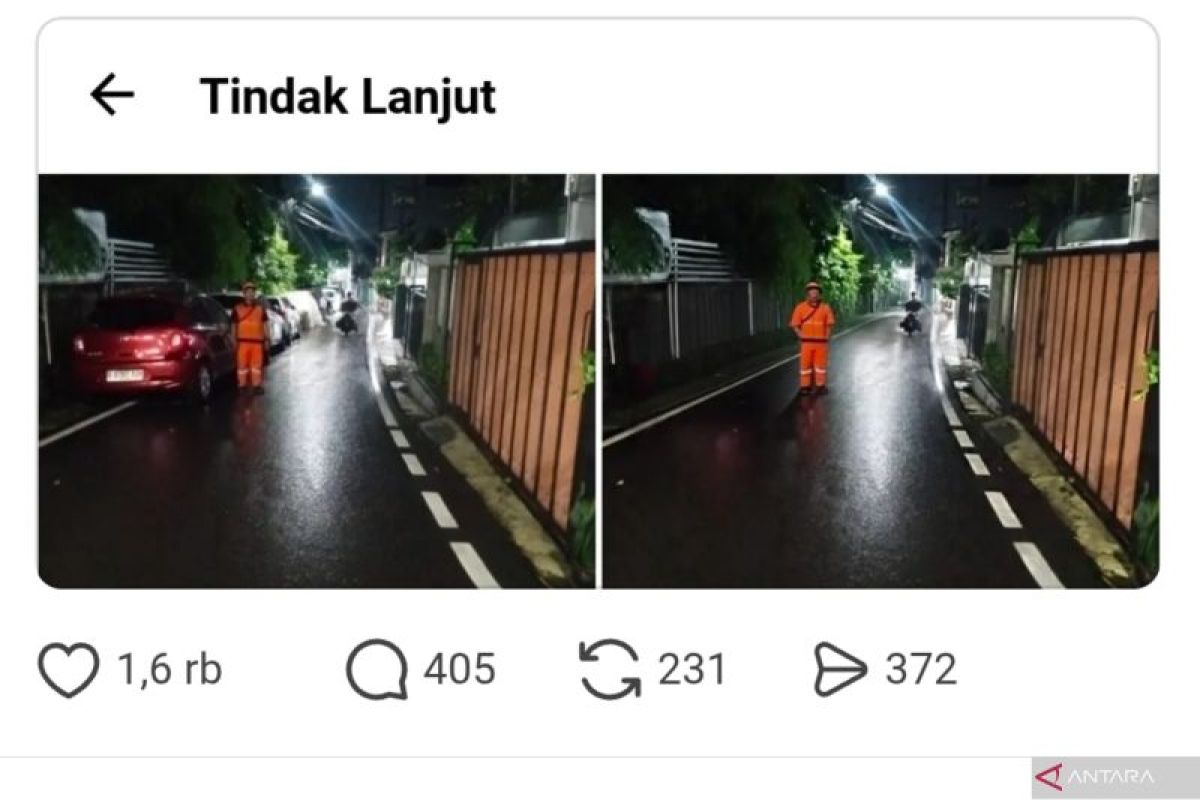

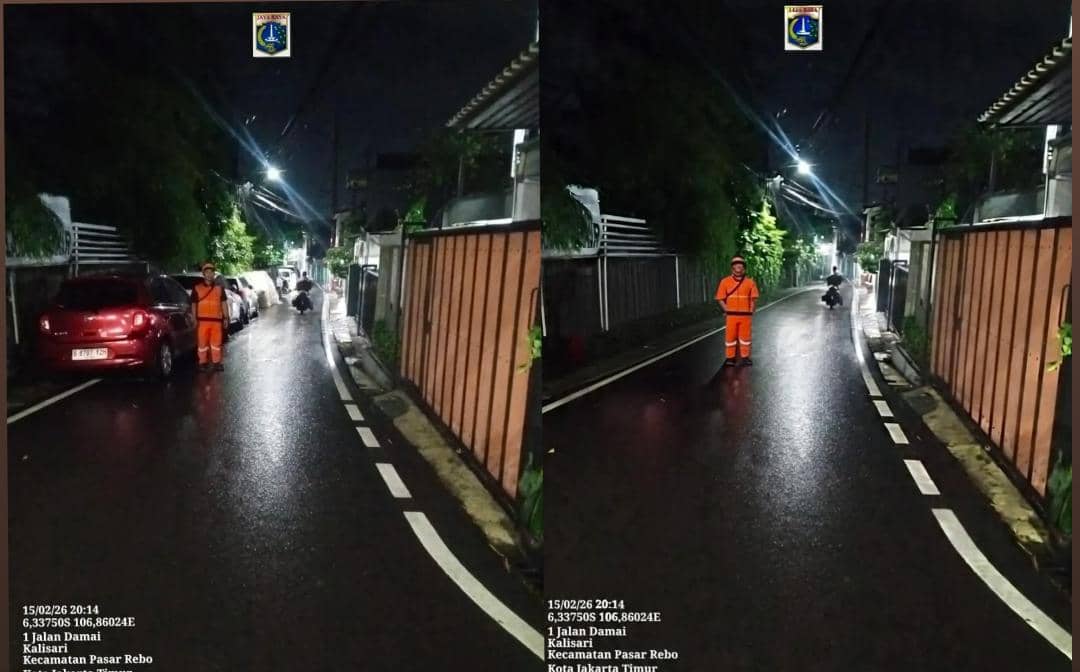

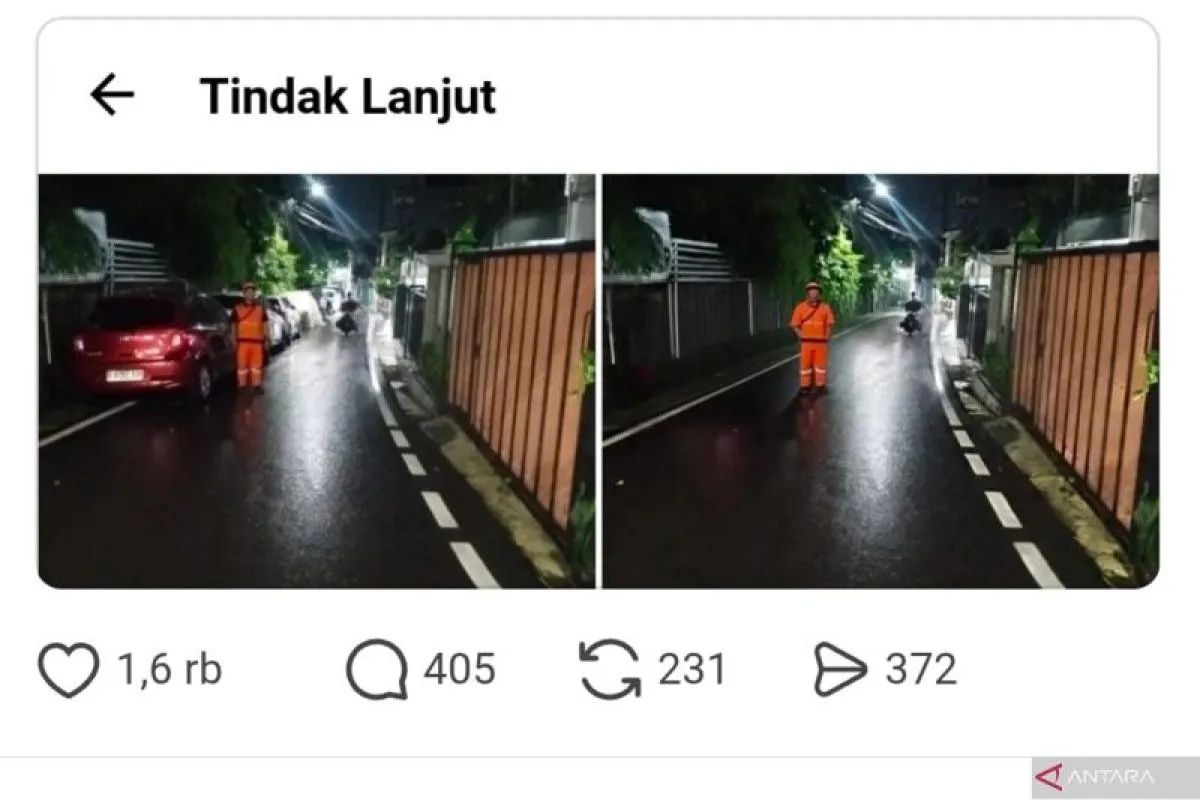

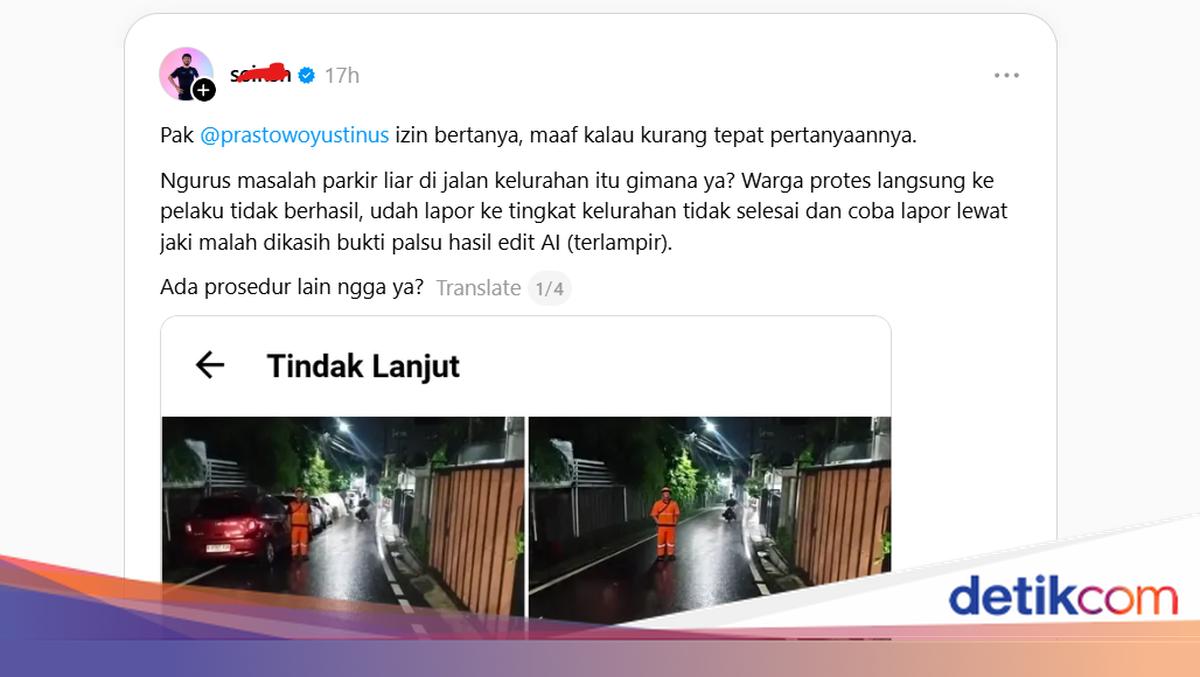

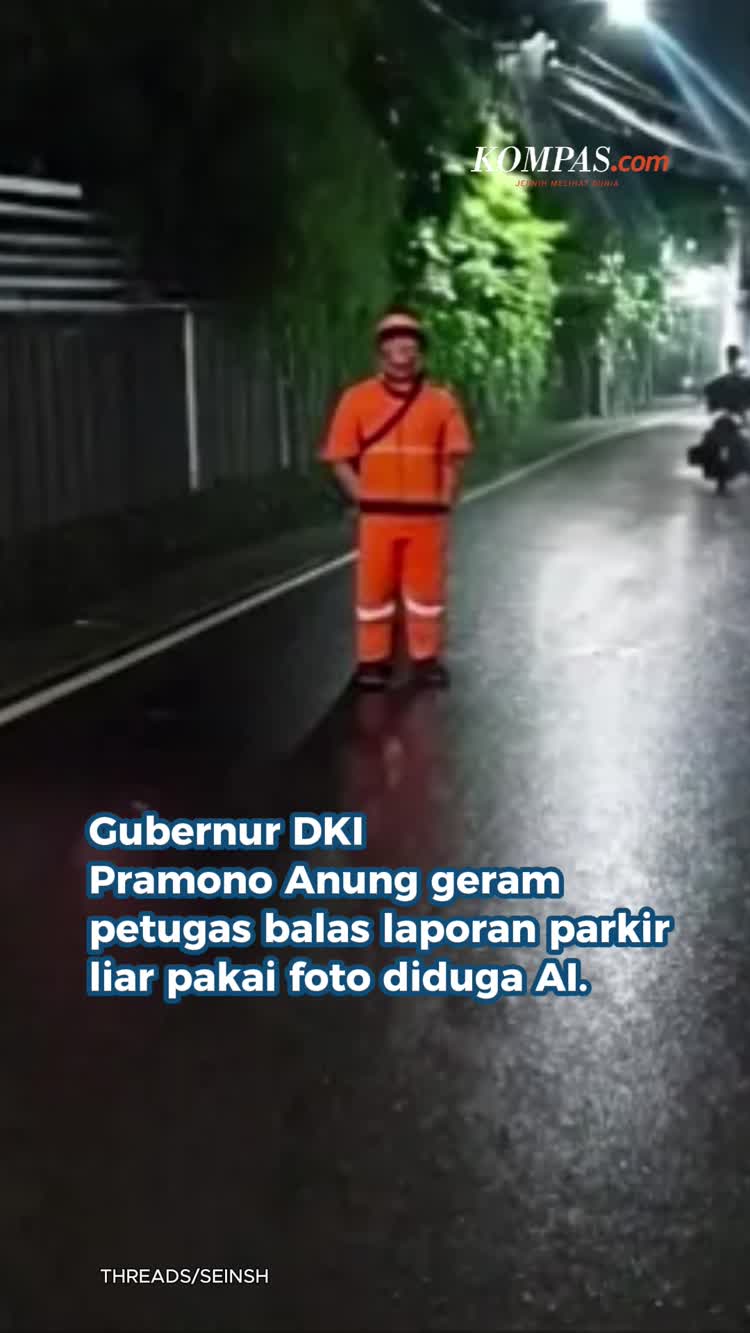

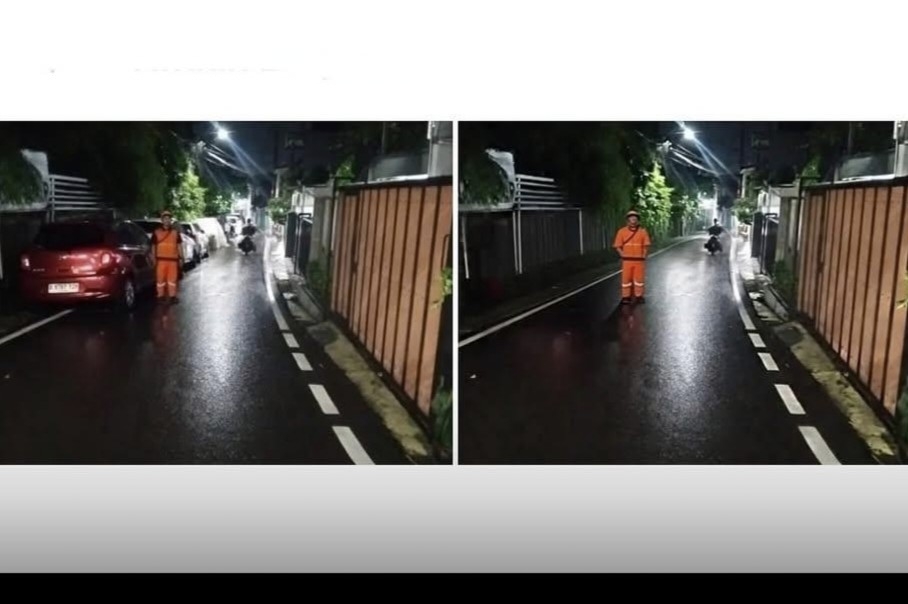

Public officials in Jakarta used AI-generated photos to falsely report that citizen complaints about illegal parking, submitted through the JAKI app, had been resolved. The incident led to disciplinary actions, public apologies, and an official investigation, and it shows how the misuse of AI can deceive the public and undermine trust in government services. [AI generated]
