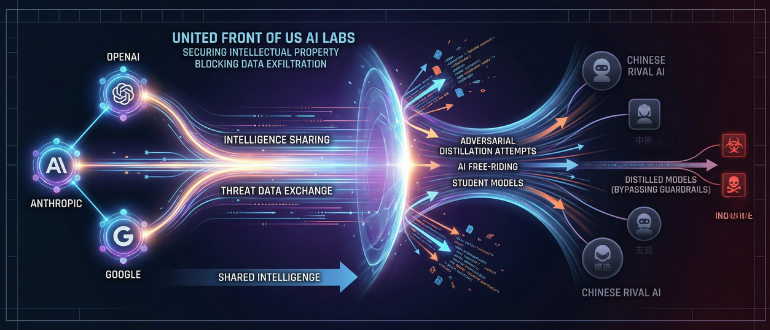

The article explicitly involves AI systems (large language models) and discusses their unauthorized use through automated scraping and distillation, which is a misuse of AI technology. The harms described are economic loss and national security risk arising from intellectual property theft, that is, a violation of intellectual property rights. However, the article does not report that these harms have directly materialized in a way that constitutes an AI Incident: it describes no direct harm to persons, communities, or critical infrastructure. Instead, it focuses on the companies' coordinated response, threat intelligence sharing, and preventive measures, which aligns with the definition of Complementary Information as covering governance and societal responses to AI risks. Nor does the article indicate plausible future harm beyond what is already being mitigated, so it is not an AI Hazard. Hence, the classification is Complementary Information.
