The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

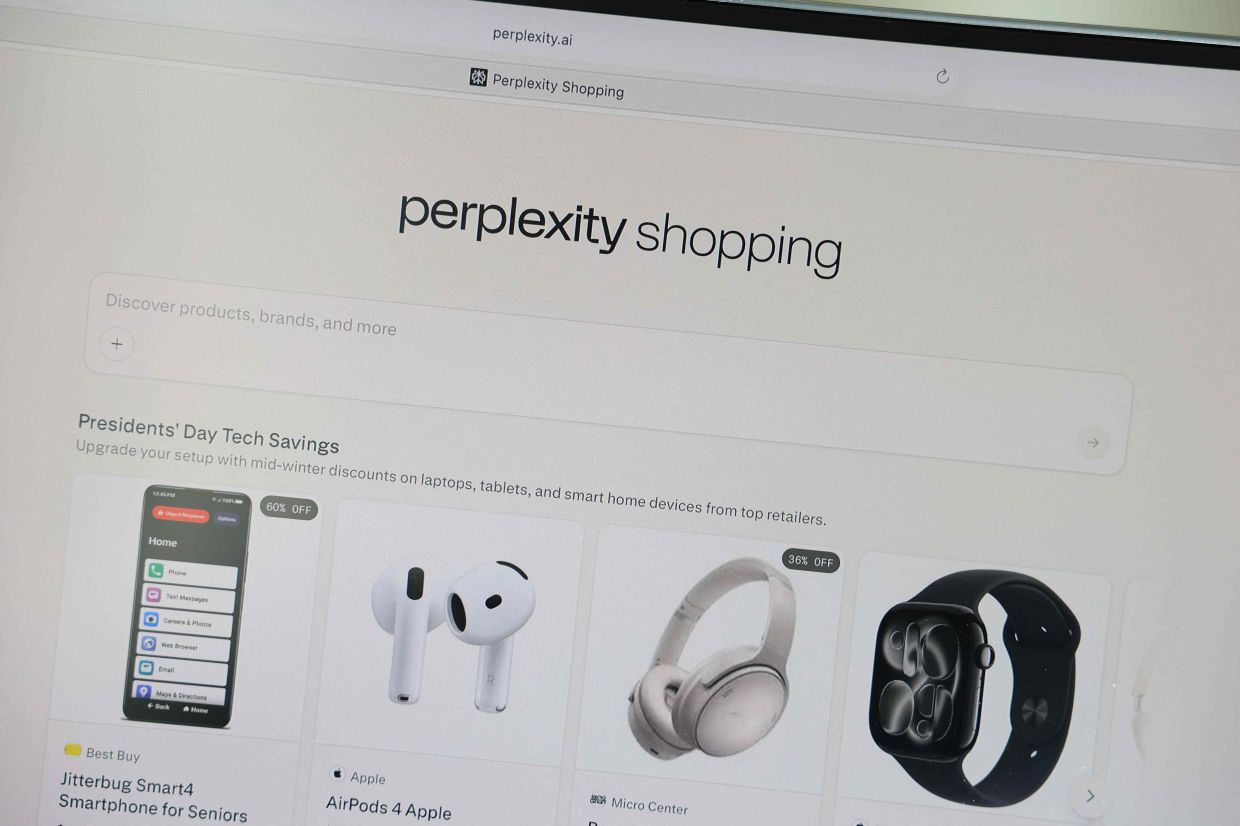

A class-action lawsuit in the United States alleges that Perplexity AI secretly shared users' conversational data, including sensitive information, with Meta and Google via embedded tracking technologies, even in incognito mode. The AI system's practices reportedly violated user privacy and data protection rights by transmitting data without consent. [AI generated]
