The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

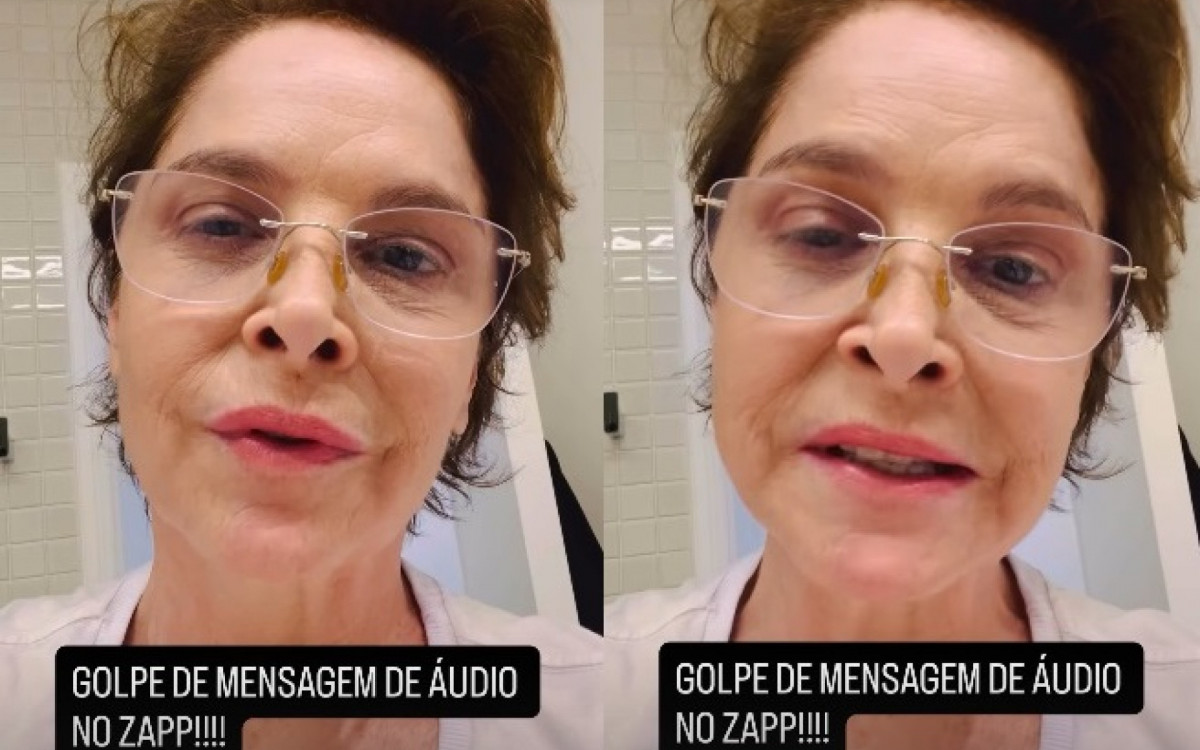

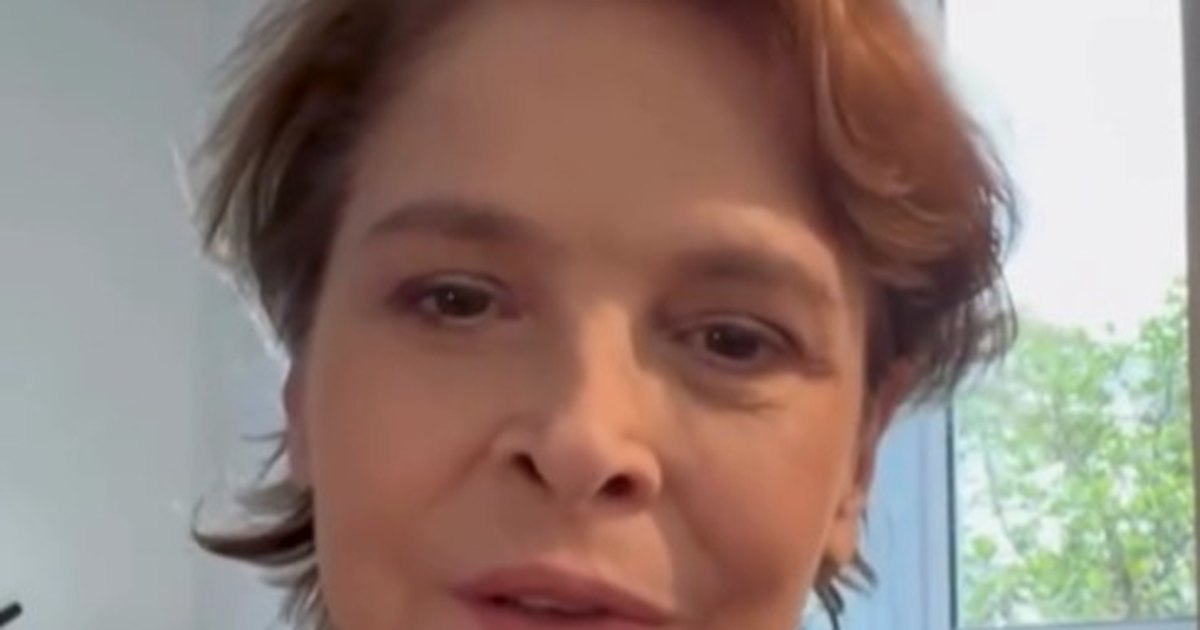

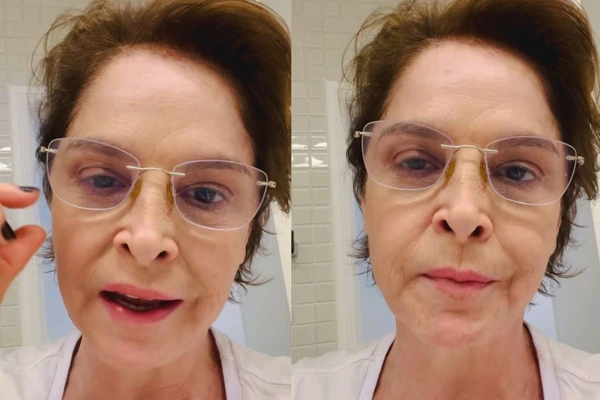

Criminals cloned Brazilian actress Drica Moraes' phone and used AI to generate fake voice messages, impersonating her to scam her contacts via WhatsApp. The AI-enabled impersonation led to fraudulent requests for money and personal information, prompting Moraes to publicly warn her followers about the ongoing scam.[AI generated]

![image 4](https://i.s3.glbimg.com/v1/AUTH_51f0194726ca4cae994c33379977582d/internal_photos/bs/2026/j/0/rbBnNDSuegzvYOp0EYPA/design-sem-nome-2026-04-06t144539.313.jpg)

![image 5](https://i.s3.glbimg.com/v1/AUTH_1f551ea7087a47f39ead75f64041559a/internal_photos/bs/2026/c/a/ejOtTlQ6m0CXRCw83XEw/arte-2026-04-06t184344.265.png)