The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

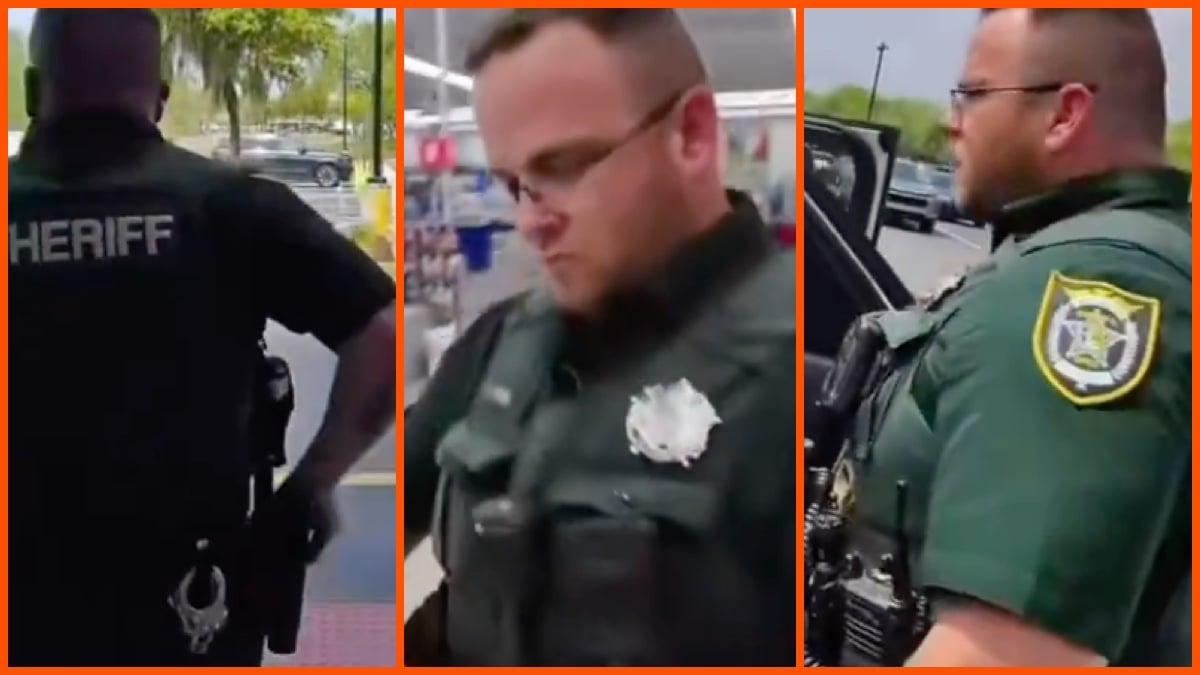

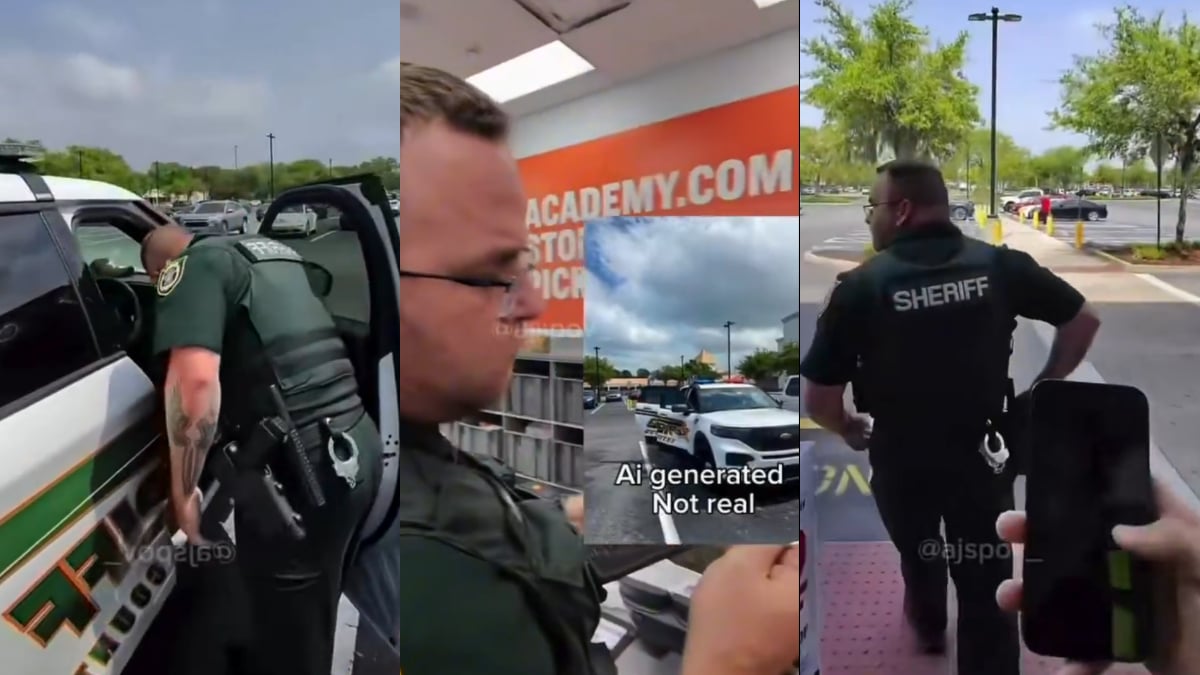

Alexis Martínez-Arizala, from Florida, was arrested after creating an AI-generated deepfake video and using it to falsely report a crime to law enforcement. The video, which depicted two Black men breaking into a police car, misled officers and wasted police resources. He was apprehended in Puerto Rico and faces multiple charges.[AI generated]