The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

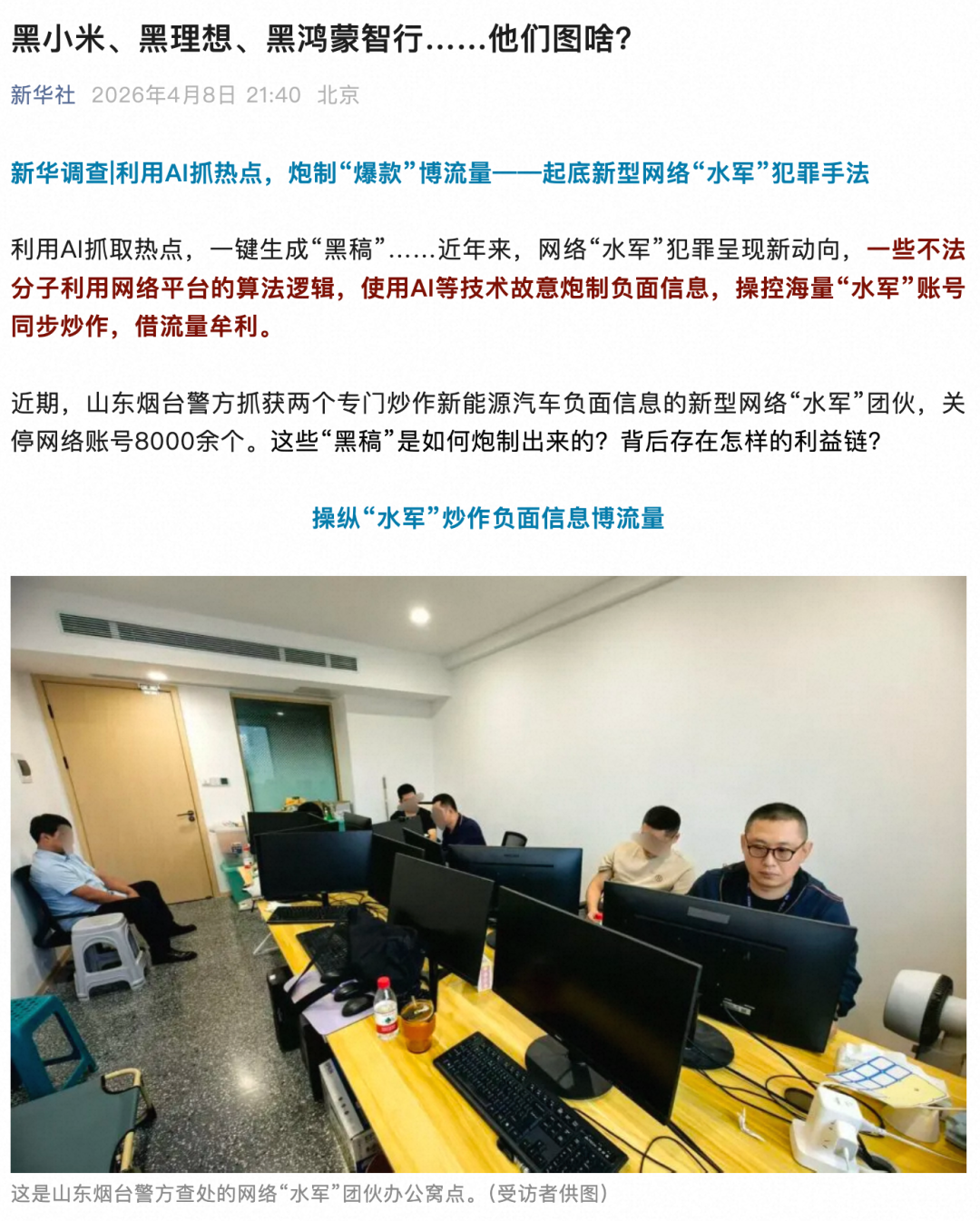

In Shanghai, two individuals used AI tools to rapidly generate and spread false articles and images about automakers such as Xiaomi, NIO, and Volvo, causing the companies reputational and economic harm. Operating thousands of social media accounts, they published 700,000 posts for profit before they were arrested and charged with illegal business operations.[AI generated]