The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

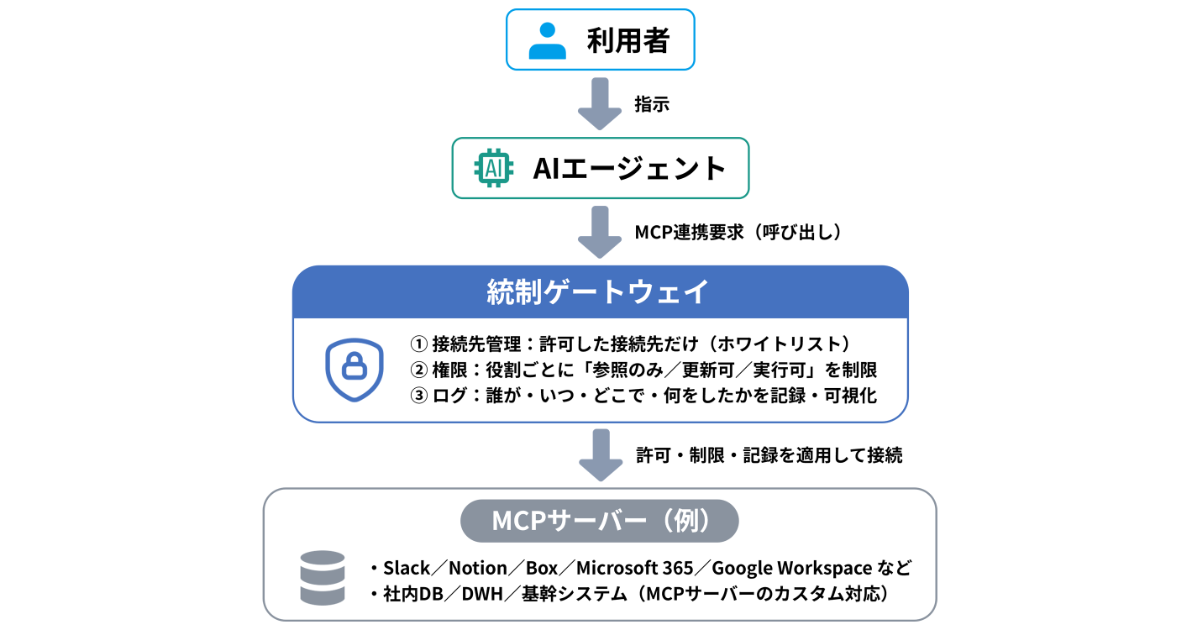

Gartner predicts that by 2028, 25% of enterprise generative AI applications will experience at least five minor security incidents annually, up from 9% in 2025, due to increased use of agent-based AI and Model Context Protocol (MCP). Risks include information leaks and inadequate security controls, prompting calls for stricter safeguards. [AI generated]