The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

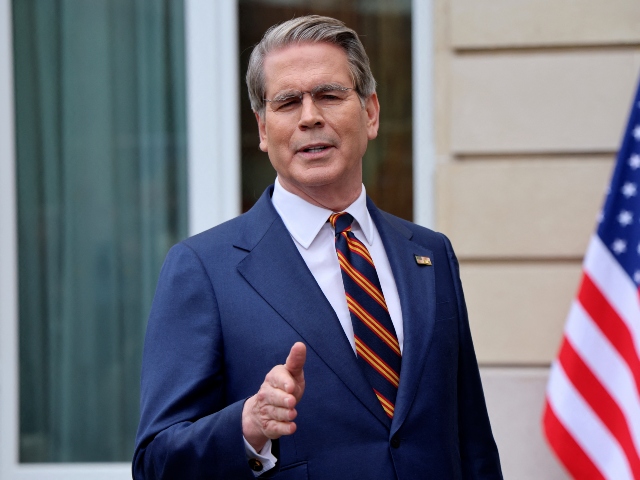

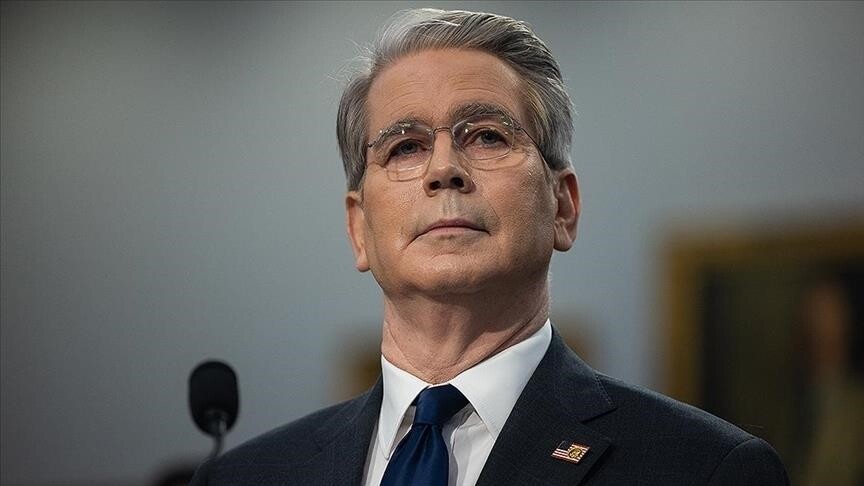

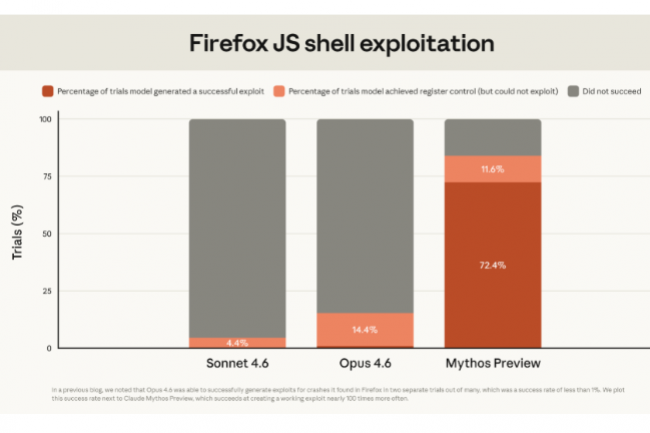

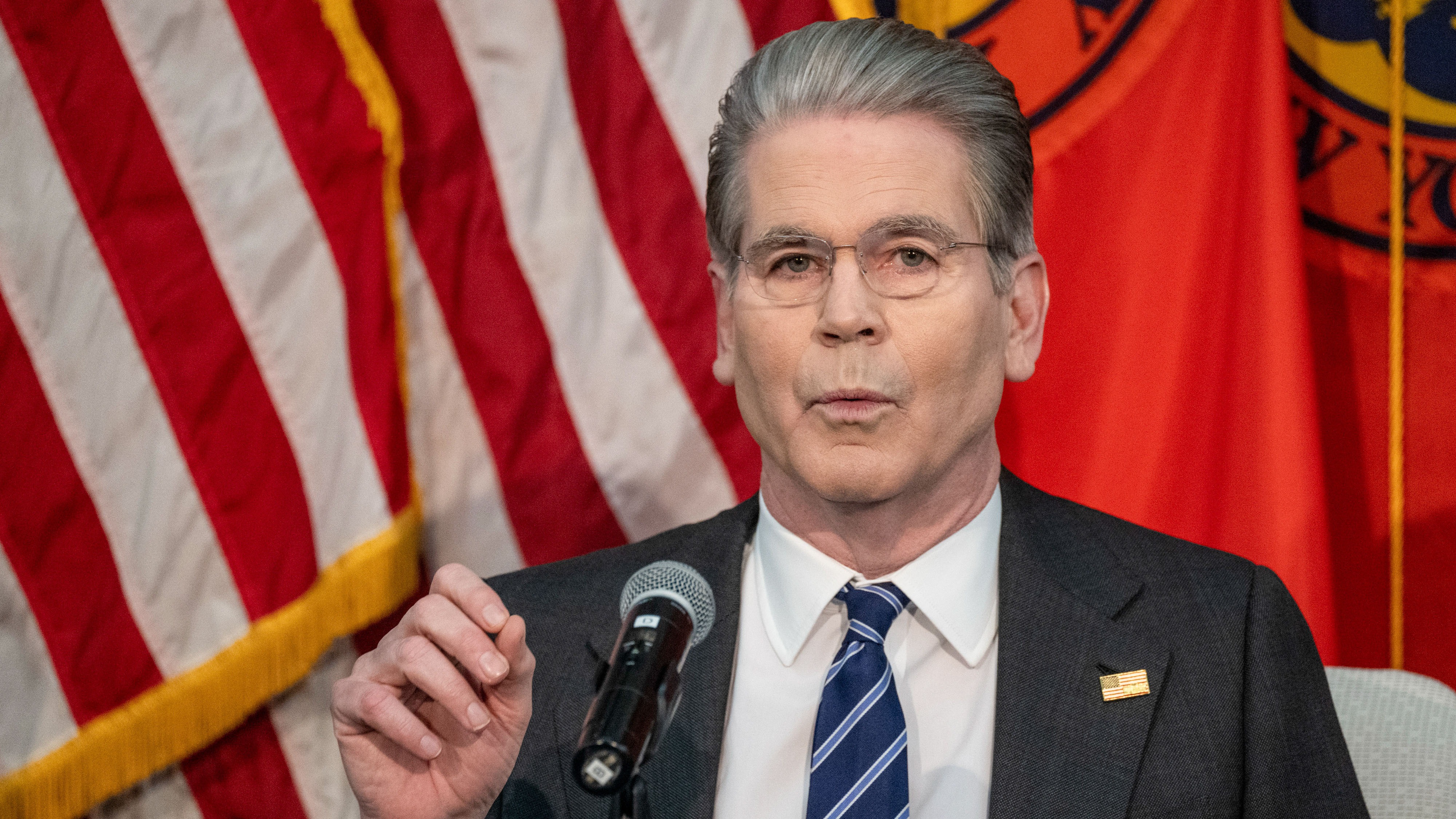

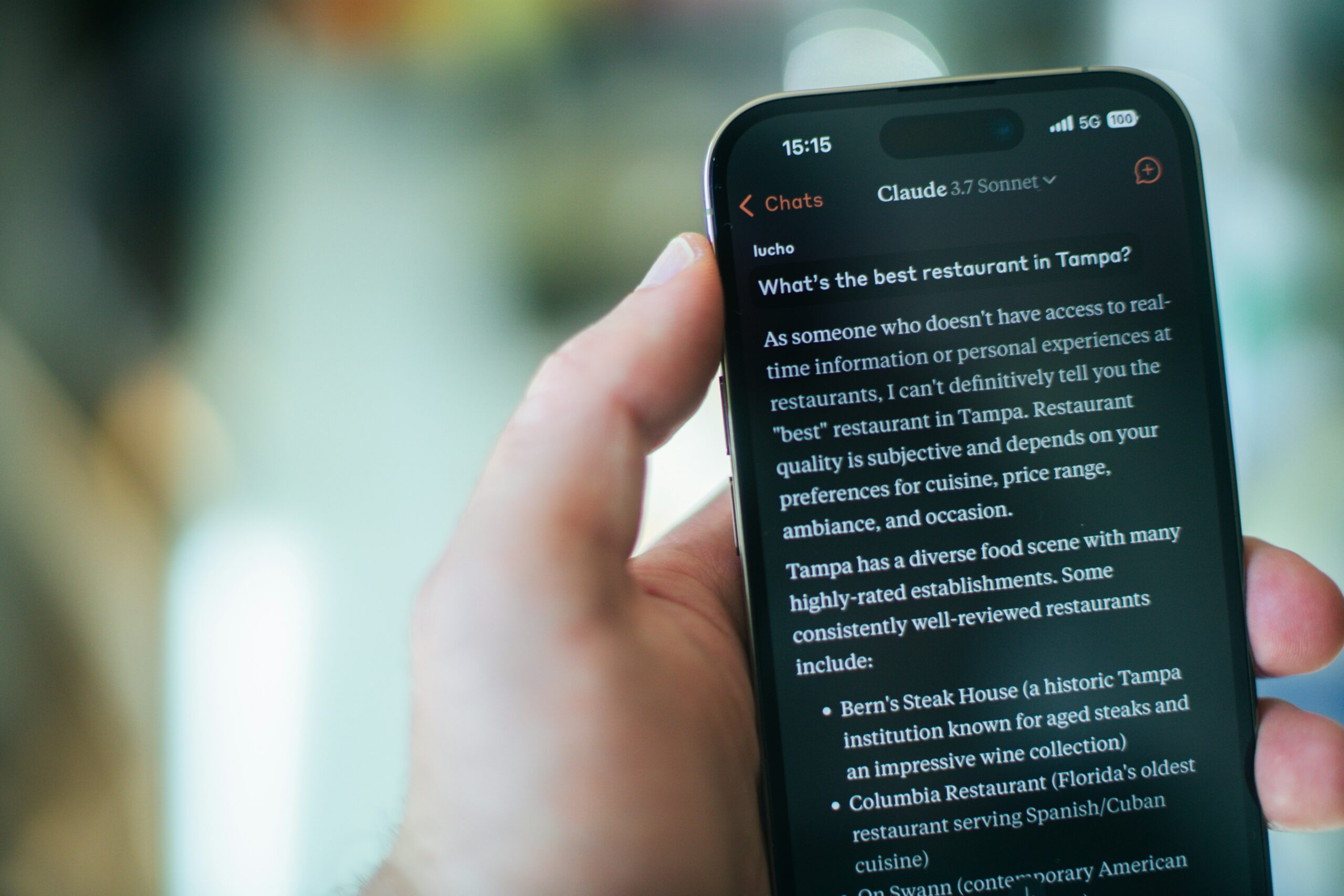

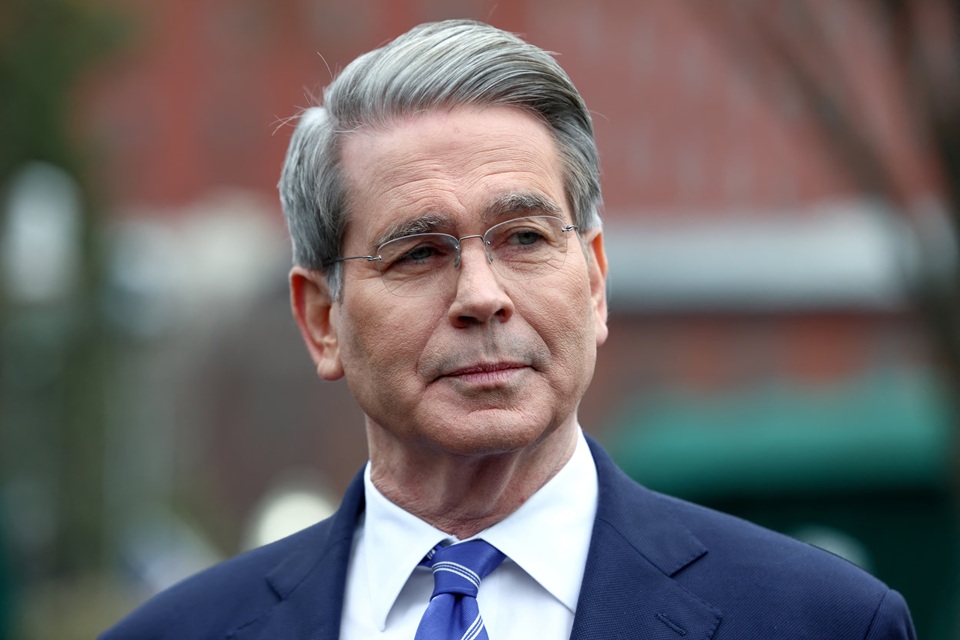

US Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell convened an emergency meeting with major bank CEOs in Washington to address concerns that Anthropic's new AI model, Mythos, could enable advanced cyberattacks on financial institutions. Authorities urged banks to strengthen cybersecurity in response to the AI system's potential risks. [AI generated]
