The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

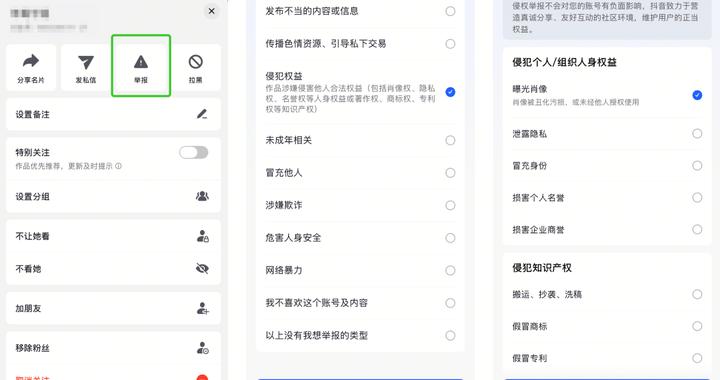

Major Chinese platforms like WeChat, Douyin, and Toutiao have intensified enforcement against AI-generated content that replaces human creators, citing issues such as misinformation, copyright infringement, and low-quality material. Actions include mass content deletions, account bans, and updated policies to mitigate ongoing harms from automated AI content production. [AI generated]