The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

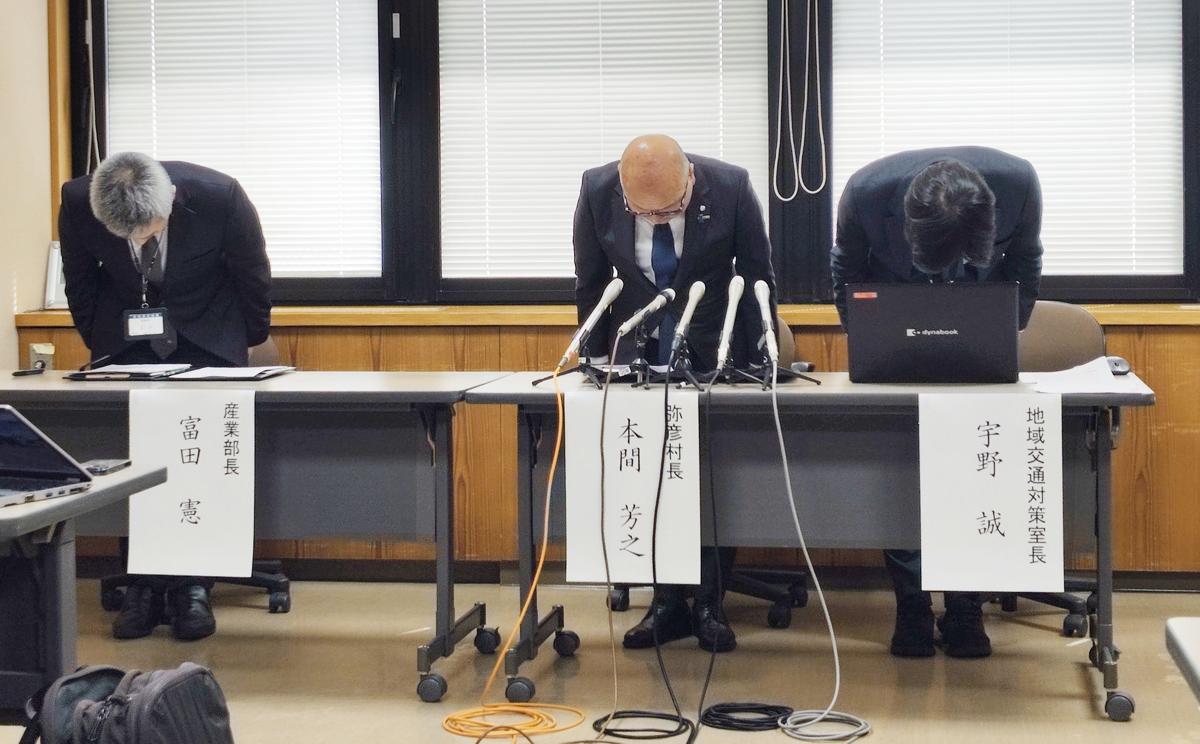

An autonomous bus in Yahiko, Japan, struck two pedestrians after switching from AI to manual operation when the AI detected people ahead. The incident, attributed to possible human error during manual driving, resulted in injuries and led to the suspension of the bus service for investigation. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event is an accident involving an autonomous bus (an AI system) that was under manual operation at the time. Two pedestrians were injured, which constitutes harm to persons. Although the accident was attributed to human operator error rather than an AI malfunction, the AI system's operating context is central to the incident: the bus is an AI system, and the harm occurred during its operation. The event therefore qualifies as an AI Incident under the definition, because the use of the AI system indirectly led to harm through human error during manual operation. [AI generated]