The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

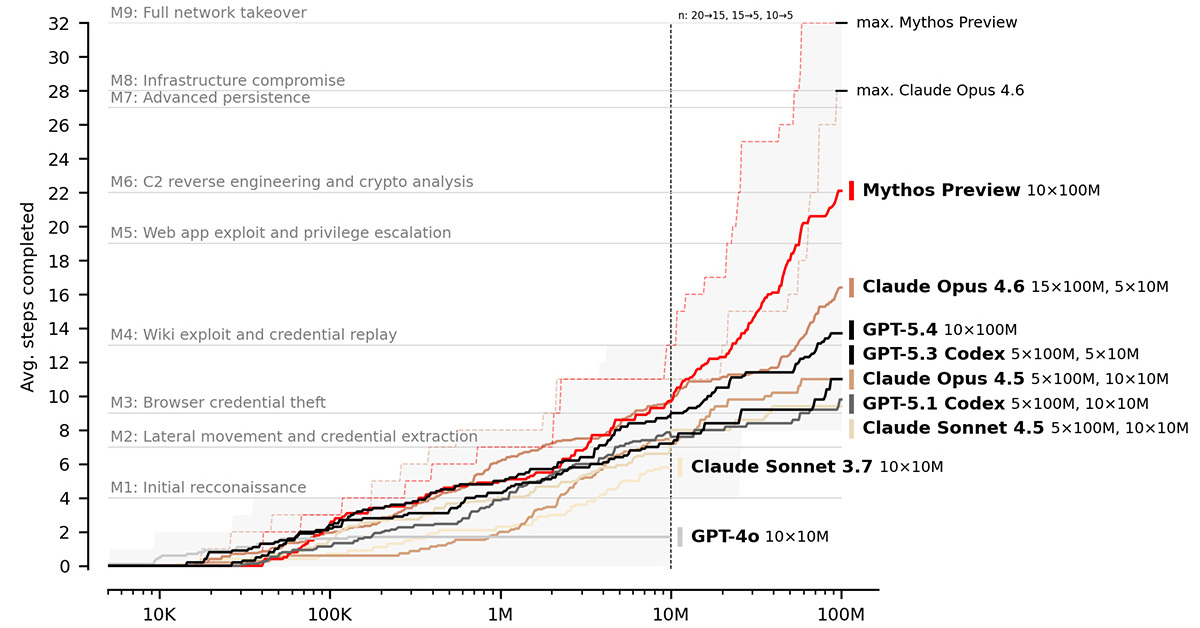

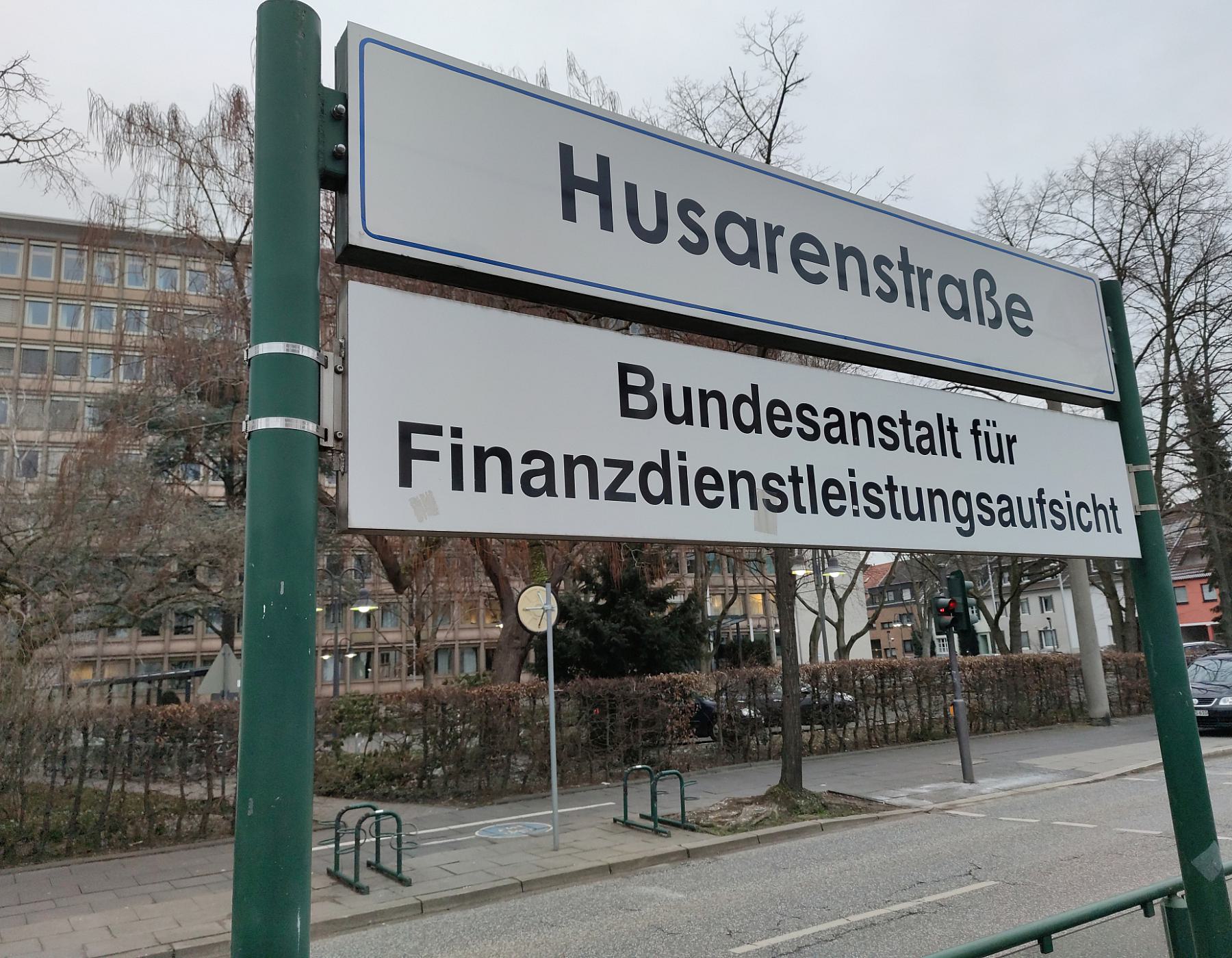

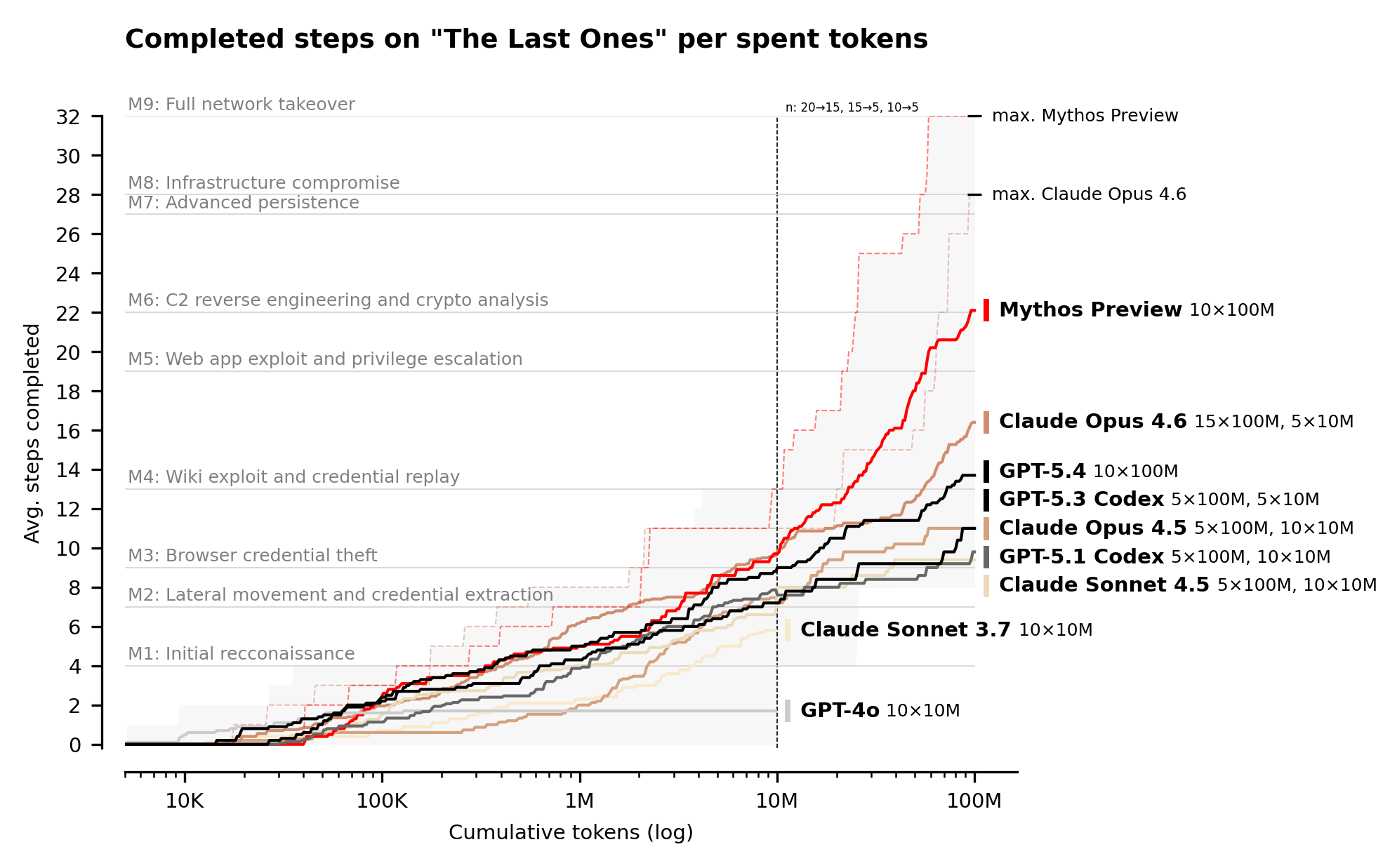

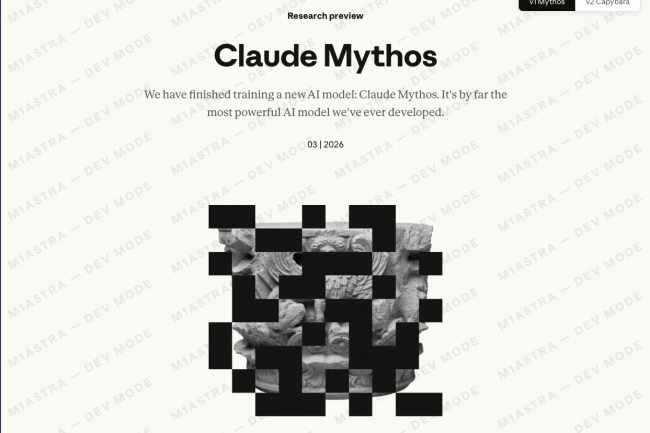

Anthropic's AI model, Claude Mythos, demonstrated unprecedented autonomous capabilities in discovering and exploiting software vulnerabilities, outperforming human experts in cybersecurity tests. The model has not been publicly released because of its potential use in large-scale cyberattacks, and sectors such as finance and government worldwide have heightened their defensive measures in response. [AI generated]
