The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

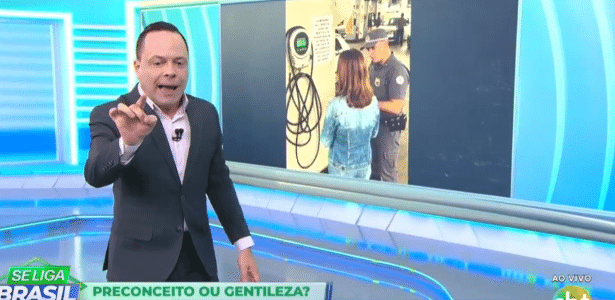

Brazilian broadcaster SBT's program 'Se Liga Brasil' aired an AI-generated fake image, presenting it as real news about alleged misogyny at a São Paulo gas station. The misinformation prompted public debate and criticism. SBT admitted the error, citing a breach of journalistic standards, and implemented internal corrective measures. [AI generated]