The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

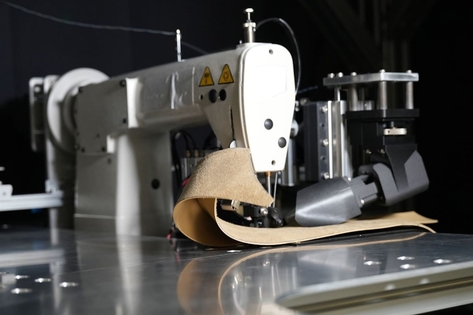

Viral videos show Indian garment factory workers wearing head-mounted cameras, reportedly to record their tasks as training data for AI systems or robots. The footage has sparked widespread concern about potential job losses, worker consent, and the ethical implications of using AI to automate skilled labor, although no actual harm has yet occurred.[AI generated]

)