The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

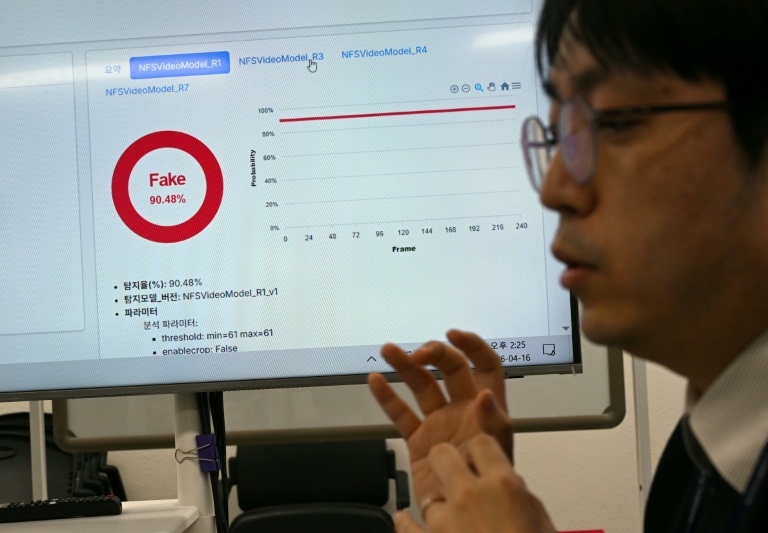

Ahead of the June 3 local elections, South Korea's prosecution, led by acting Prosecutor General Koo Ja-hyun, is mobilizing 600 investigators to deal strictly with AI-generated fake news and black propaganda (smear campaigns). The crackdown targets AI-driven misinformation, including deepfakes, to protect election integrity and public trust. [AI generated]