The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

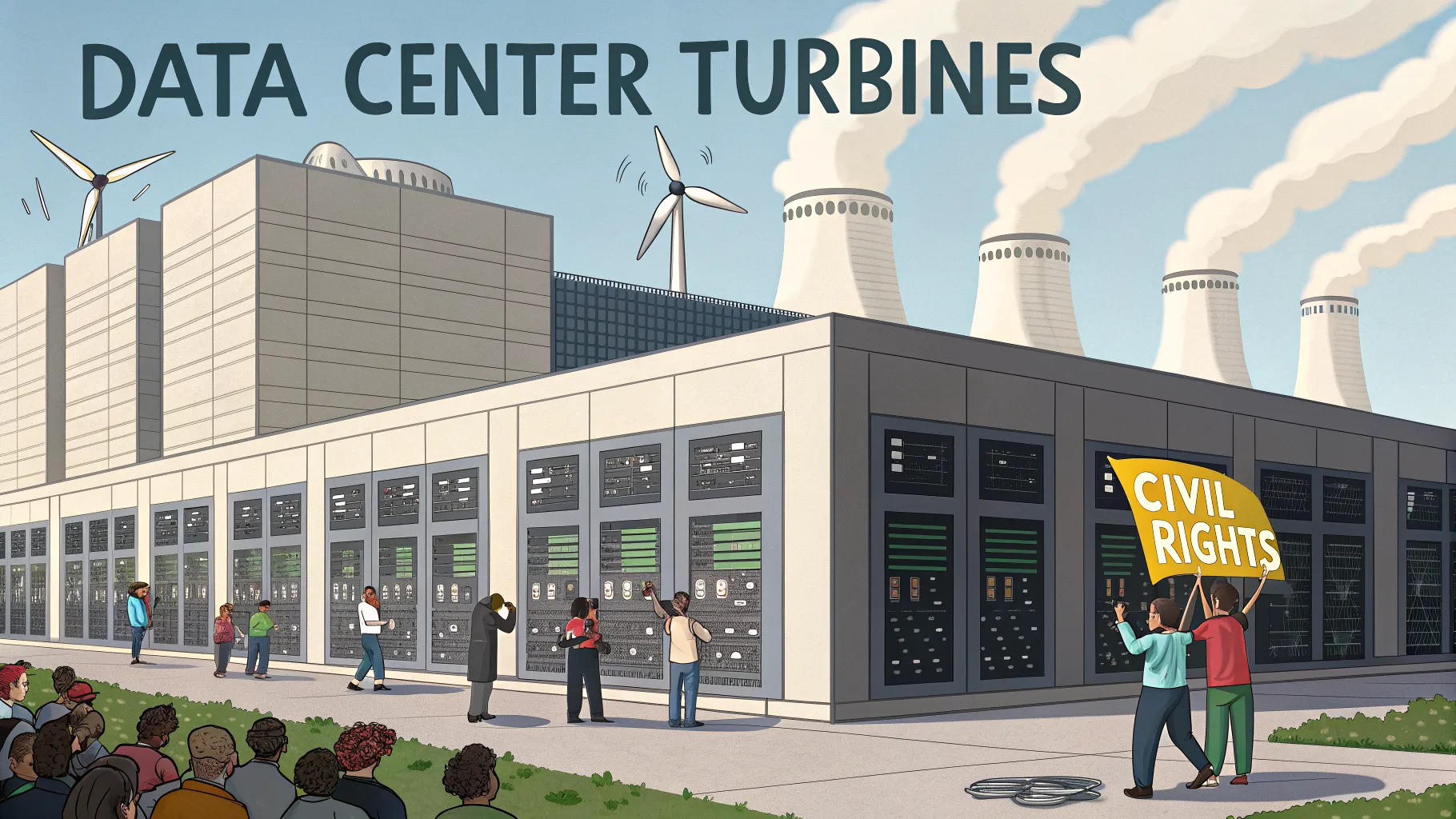

The NAACP has sued Elon Musk's xAI and its subsidiary MZX Tech, alleging that they illegally operated 27 gas turbines without permits to power a data center in Mississippi that supports the Grok AI chatbot. The lawsuit claims that the turbines caused harmful pollution, violating the Clean Air Act and endangering the health of local communities. [AI generated]

)