The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

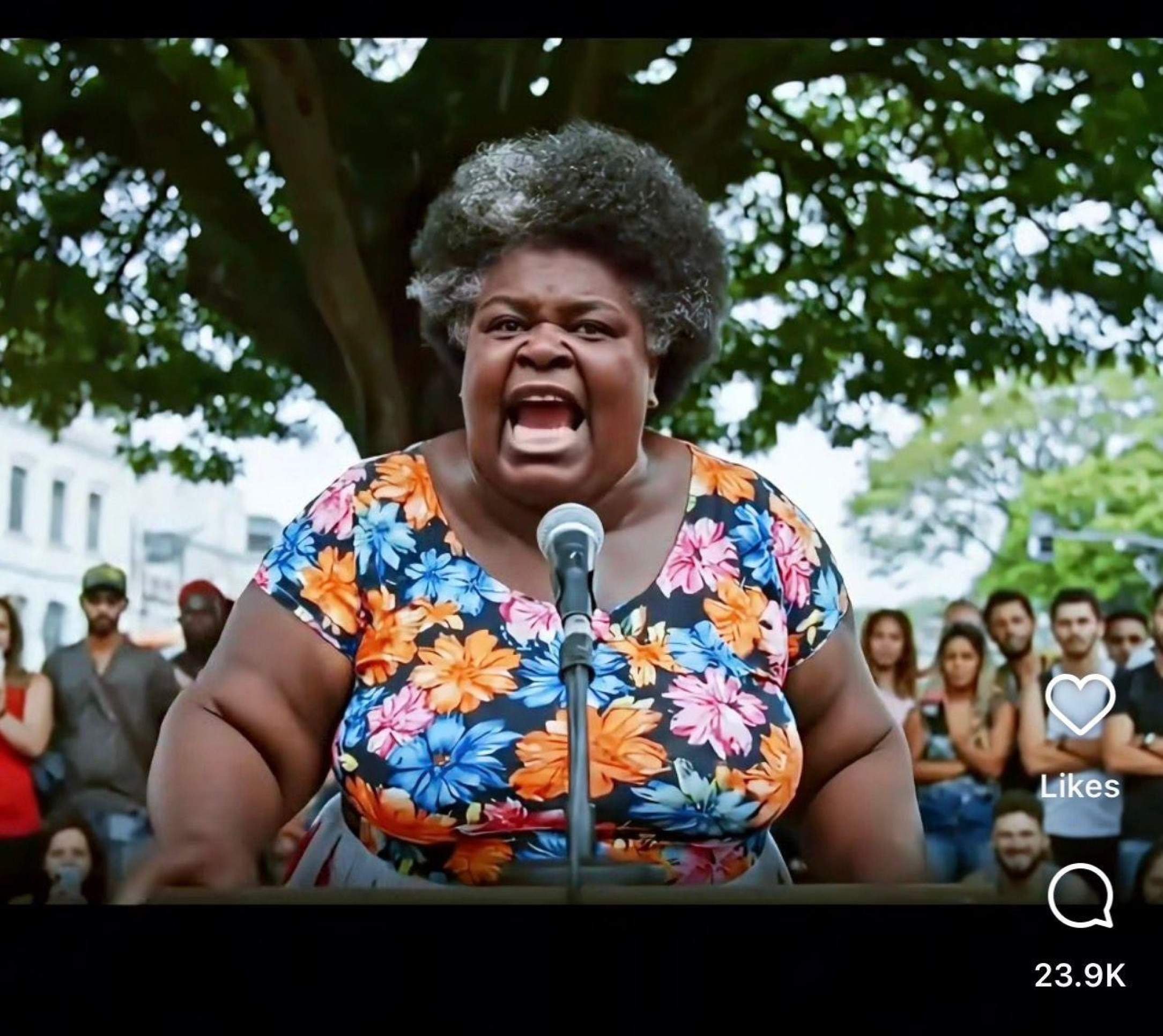

An AI-generated digital influencer, 'Dona Maria,' created using Google's Gemini, went viral in Brazil by posting aggressive, politically charged content criticizing President Lula and the Supreme Court. The AI avatar's widespread reach and influence raised concerns about manipulation of public opinion, electoral integrity, and potential violations of election laws.[AI generated]