The information displayed in the AI Incidents Monitor (AIM) should not be reported as representing the official views of the OECD or of its member countries.

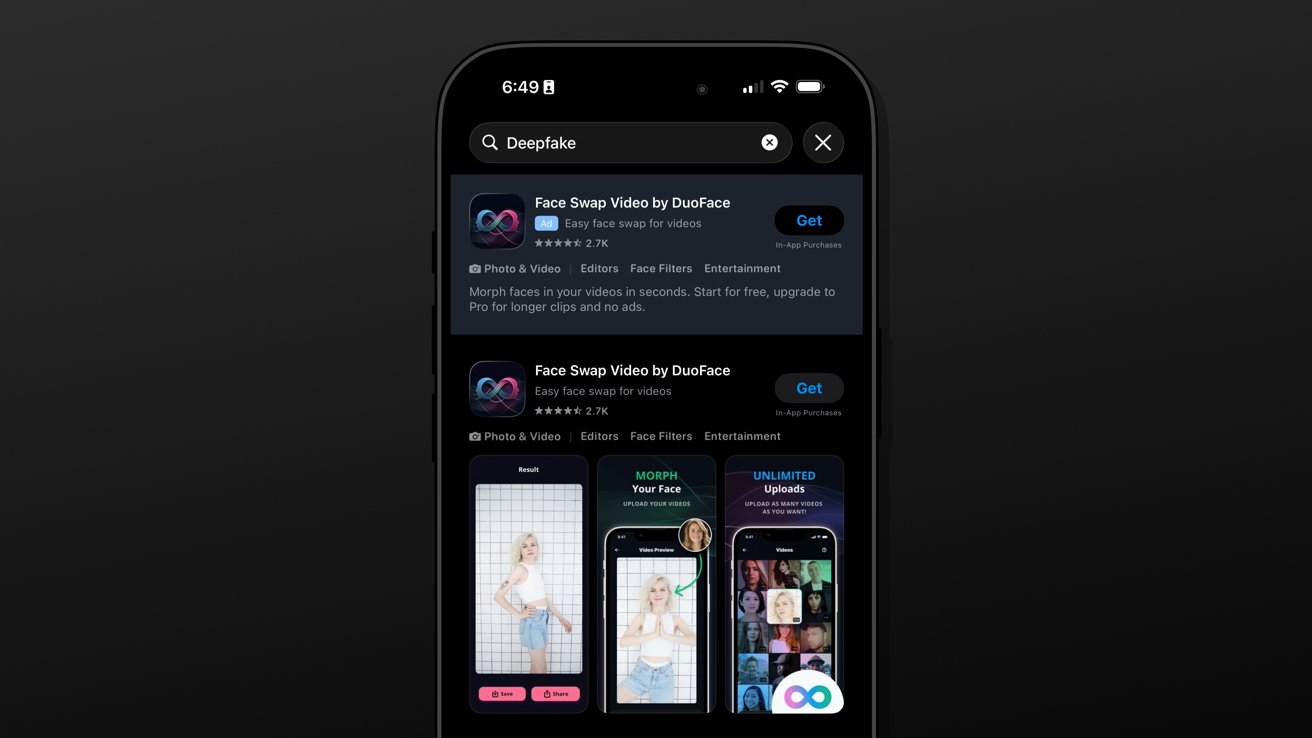

Apple and Google are under scrutiny after reports revealed that their app stores host and promote AI-powered 'nudify' apps, which generate nonconsensual sexualized images in violation of privacy and human rights. Despite policies prohibiting such content, enforcement gaps allowed these apps to reach millions of downloads and generate significant revenue, exposing users to harm.[AI generated]