The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

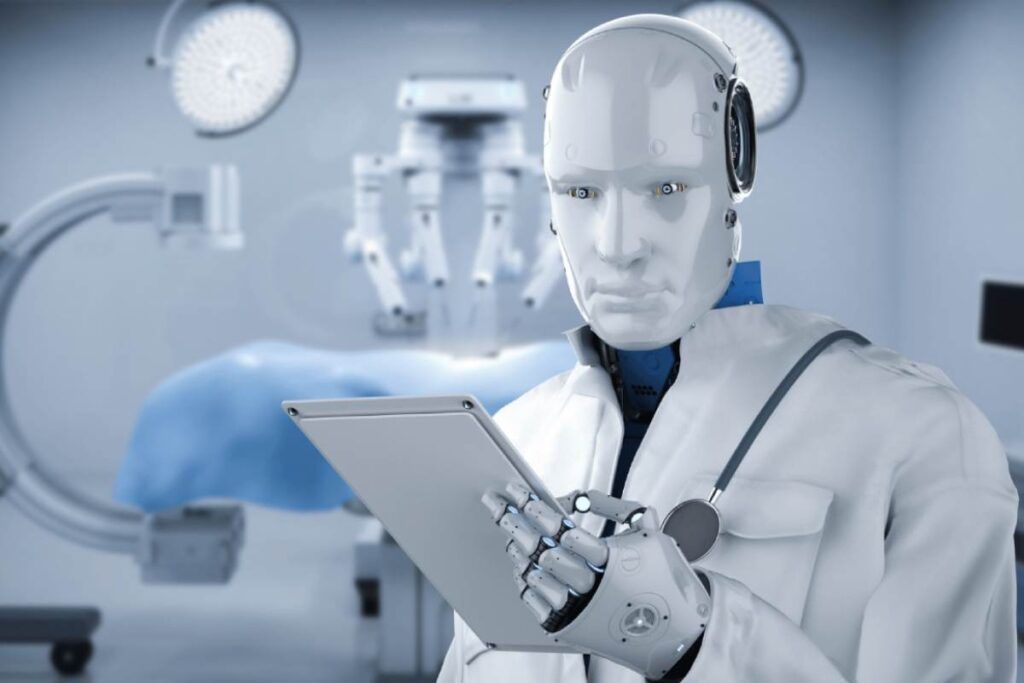

A study by Mass General Brigham found that AI chatbots, including ChatGPT and Gemini, gave incorrect medical diagnoses in over 80% of cases when provided with incomplete patient information. Even with full data, error rates remained high, raising concerns about the reliability of AI in medical diagnostics.[AI generated]

Why's our monitor labelling this an incident or hazard?

The AI systems involved are large language models used as medical diagnostic chatbots, which clearly qualify as AI systems. The study shows that their use leads to a high rate of diagnostic errors, which can cause harm to patients' health by misguiding treatment decisions. This constitutes direct harm to health (harm category a) caused by the AI systems' outputs. Therefore, this event qualifies as an AI Incident due to the realized harm from the AI systems' use in medical diagnosis.[AI generated]