The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

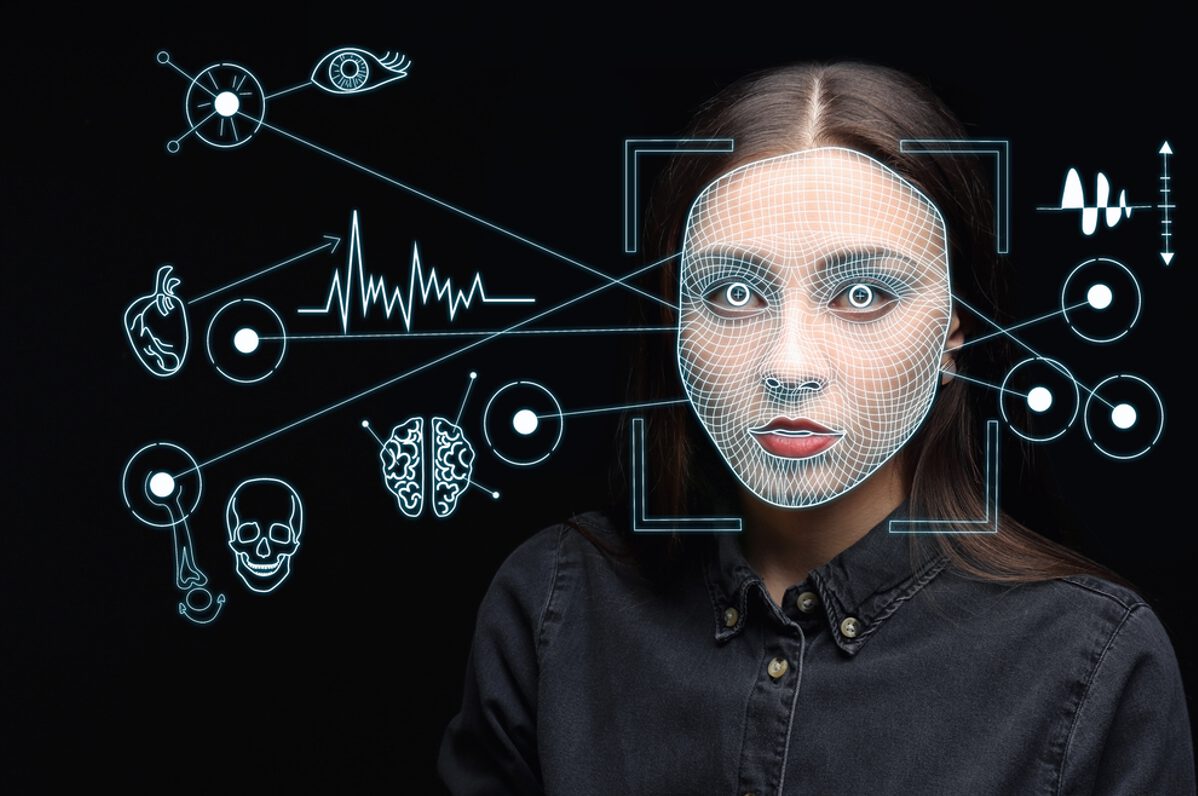

Kimberlee Williams, an Oklahoma resident, was wrongfully arrested and jailed for six months after facial recognition AI misidentified her as a suspect in Maryland bank fraud cases. Authorities relied on the AI match without proper verification, resulting in multiple felony charges and significant harm to Williams' rights and freedom. [AI generated]