The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

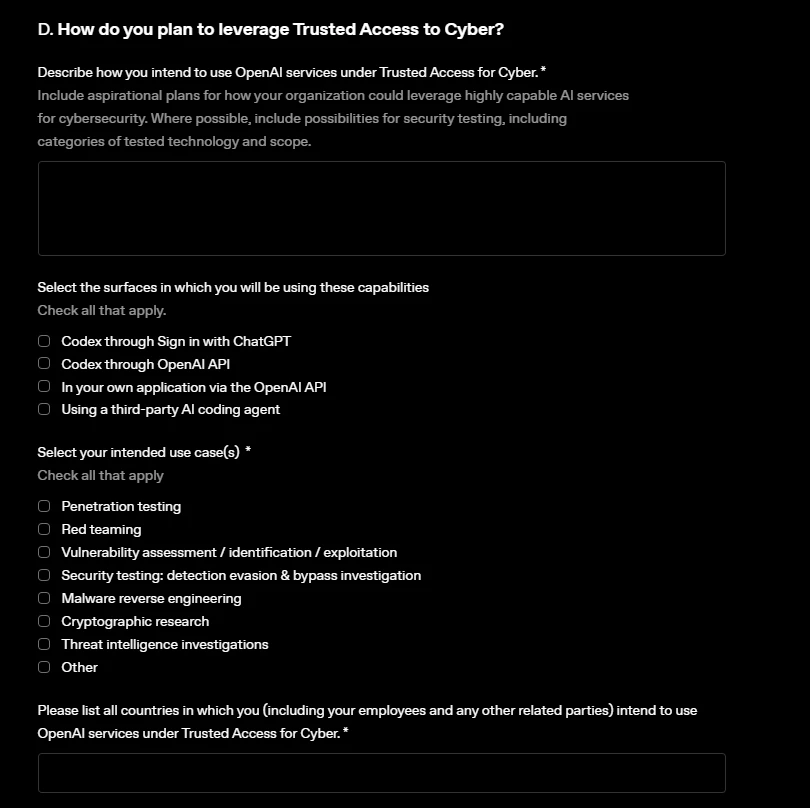

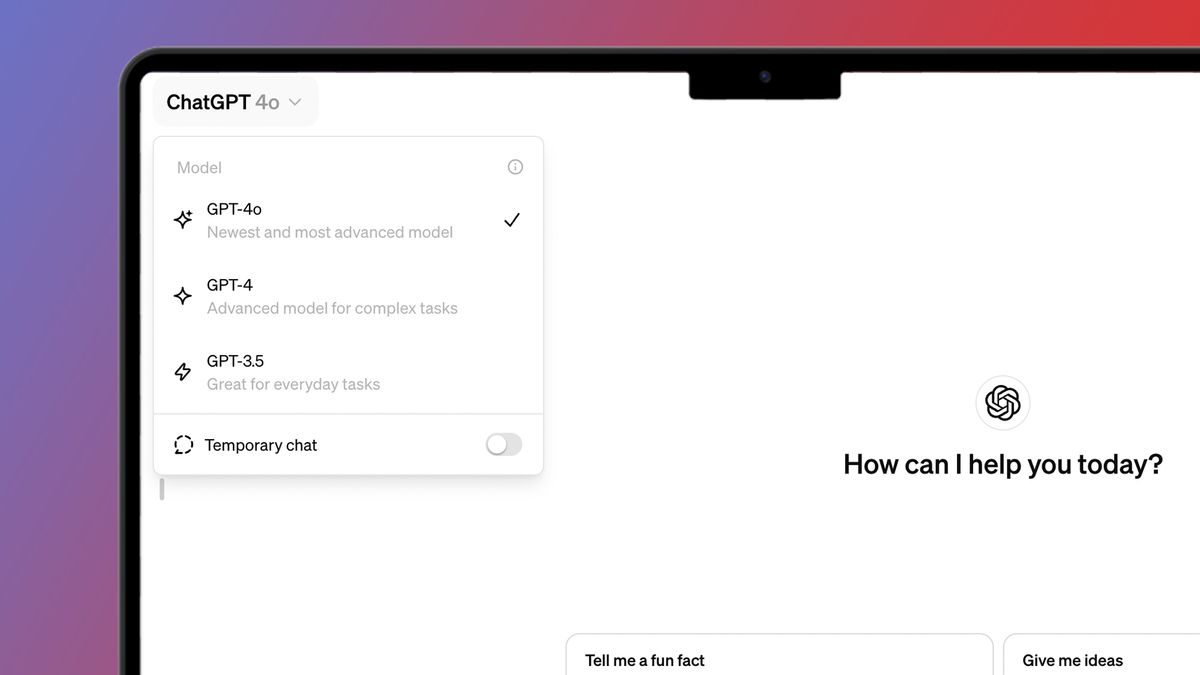

OpenAI and Anthropic have released advanced AI models for cybersecurity (GPT-5.4-Cyber and Claude Mythos) that can detect software vulnerabilities. Although the models are intended for defensive use, their potential for misuse has alarmed governments and financial institutions, prompting high-level meetings and warnings about risks to critical infrastructure. No actual harm has occurred yet. [AI generated]
