The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

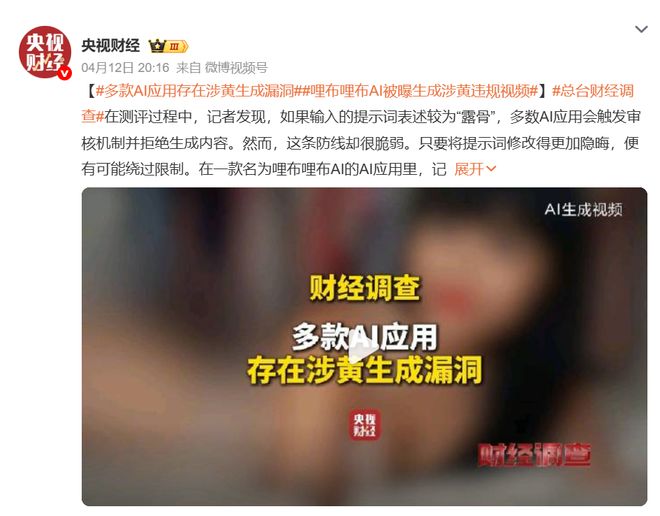

LiblibAI, an AI content generation platform operated by Beijing Singularity Xingyu Technology, produced sexually explicit videos after users bypassed moderation with complex prompts. The incident, exposed by CCTV, highlighted flaws in content safety mechanisms. The company apologized, initiated technical fixes, and upgraded moderation to prevent future harm.[AI generated]