The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

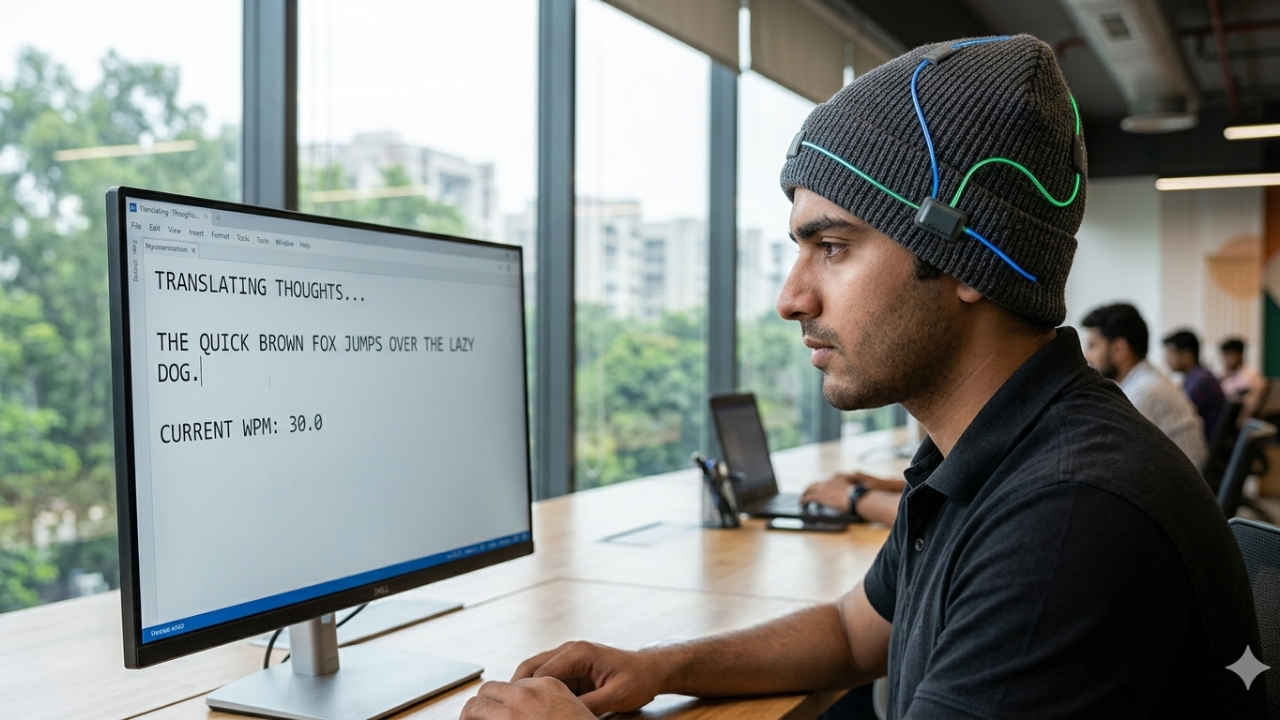

California-based startup Sabi is developing a wearable AI-powered cap that uses EEG sensors to convert brain signals into text, offering a non-invasive alternative to Neuralink. While no harm has occurred, the technology raises plausible future risks to privacy and of misuse of sensitive neural data. [AI generated]