The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

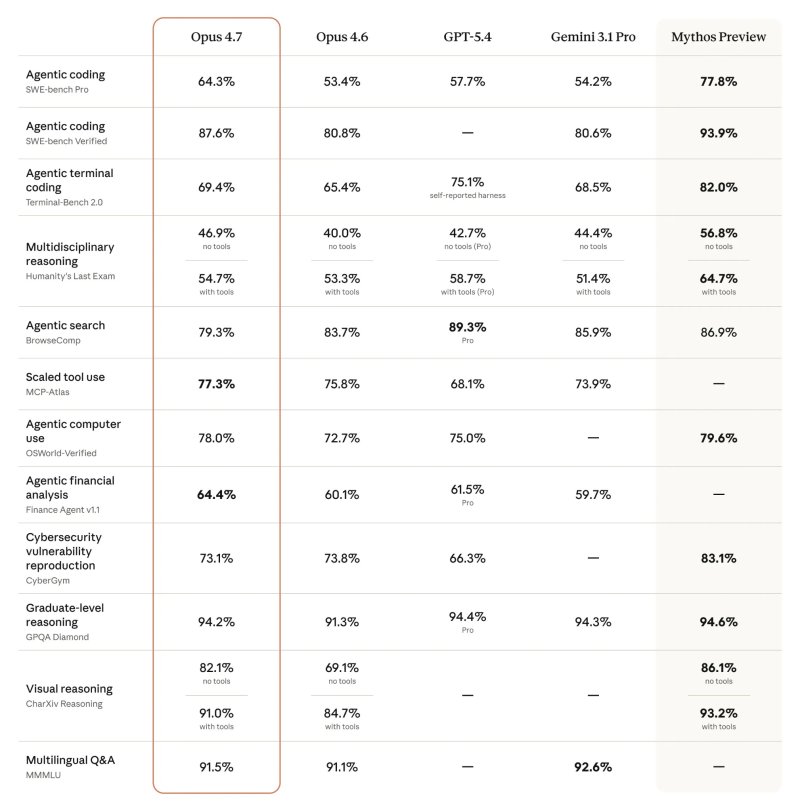

Anthropic's advanced AI model Mythos raised cybersecurity concerns because of its ability to find critical software bugs. In response, the U.S. government is considering protective measures governing its use, and Anthropic released Opus 4.7 with intentionally reduced cybersecurity capabilities to mitigate the risk of misuse. [AI generated]