The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

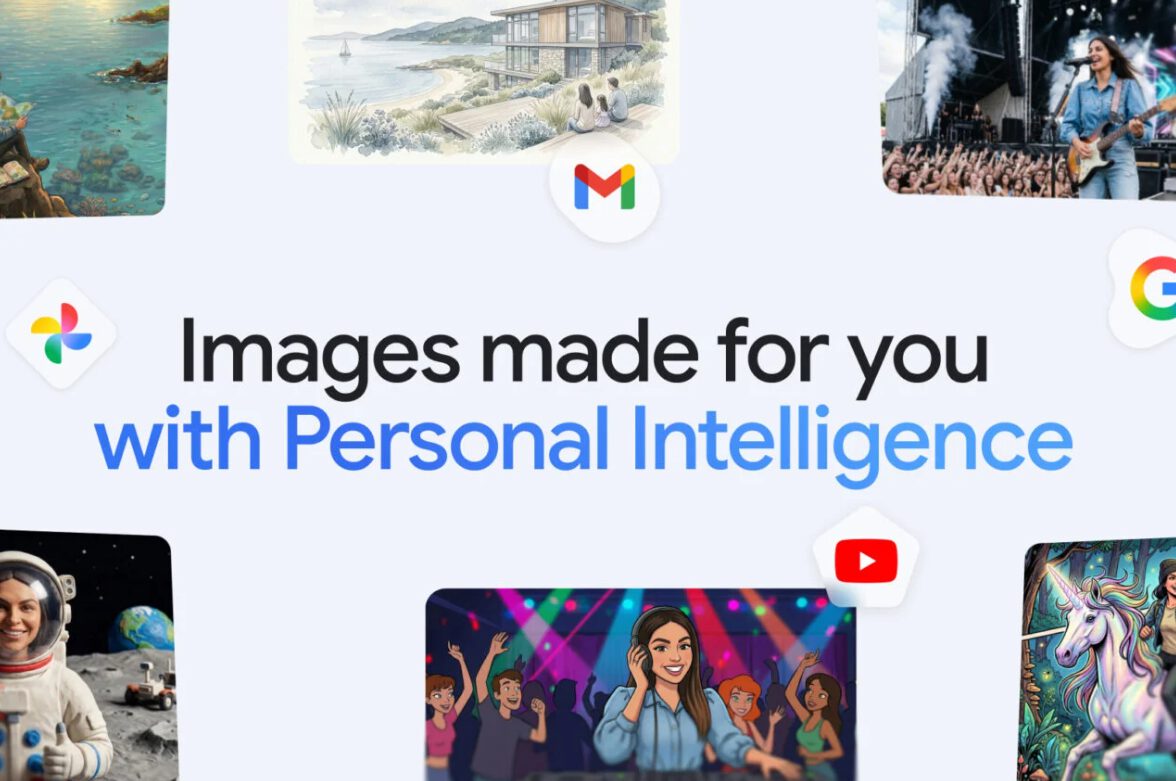

Google's Gemini AI now scans users' personal photos and emails to generate personalized content, raising significant privacy concerns. The opt-in feature accesses sensitive data from Google Photos and Gmail, prompting criticism over vague consent processes and potential rights violations. Privacy advocates and regulators are scrutinizing the update for possible misuse of personal information. [AI generated]