The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

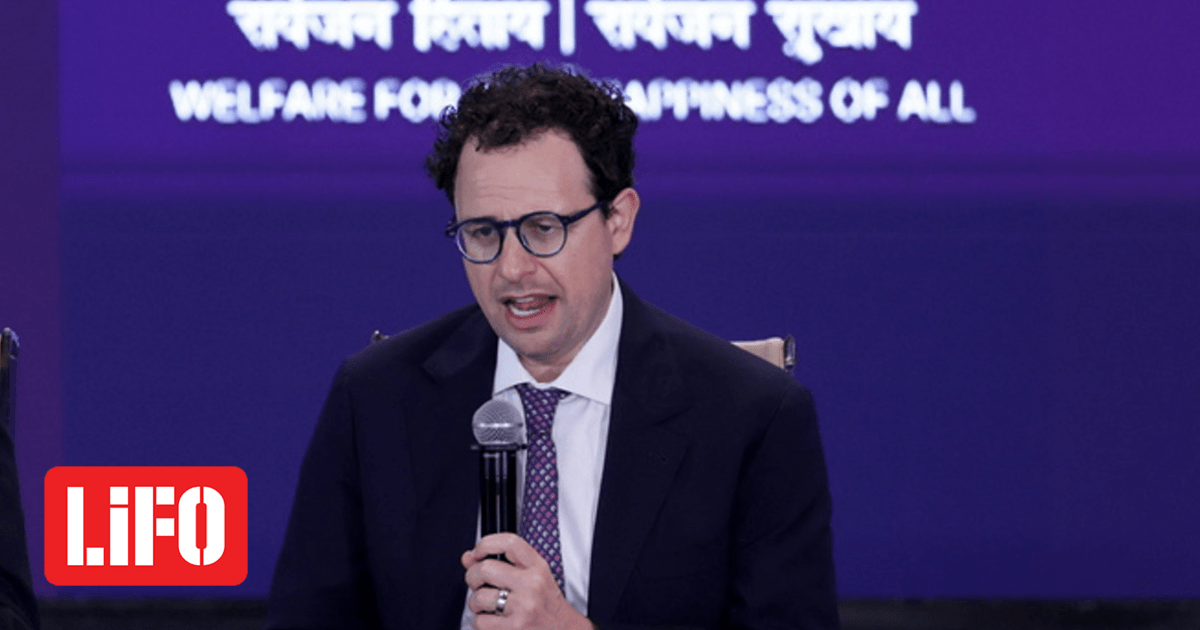

Anthropic's advanced AI model, Mythos, has sparked significant concern due to its powerful cybersecurity capabilities, which could be misused for large-scale cyberattacks. Despite Pentagon bans, US and UK intelligence agencies have accessed Mythos, highlighting risks of misuse and governance challenges, though no actual harm has yet occurred. [AI generated]