The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

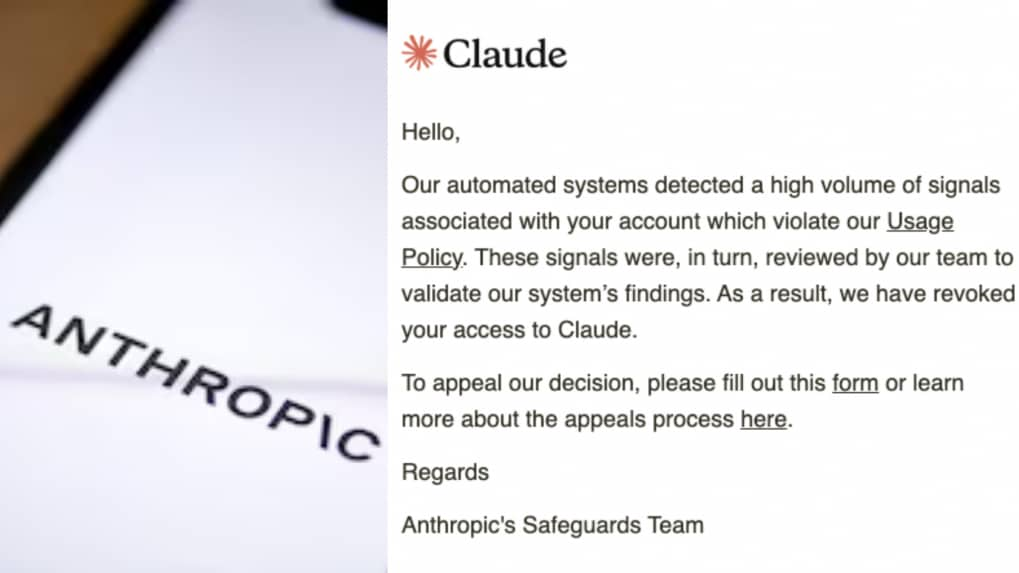

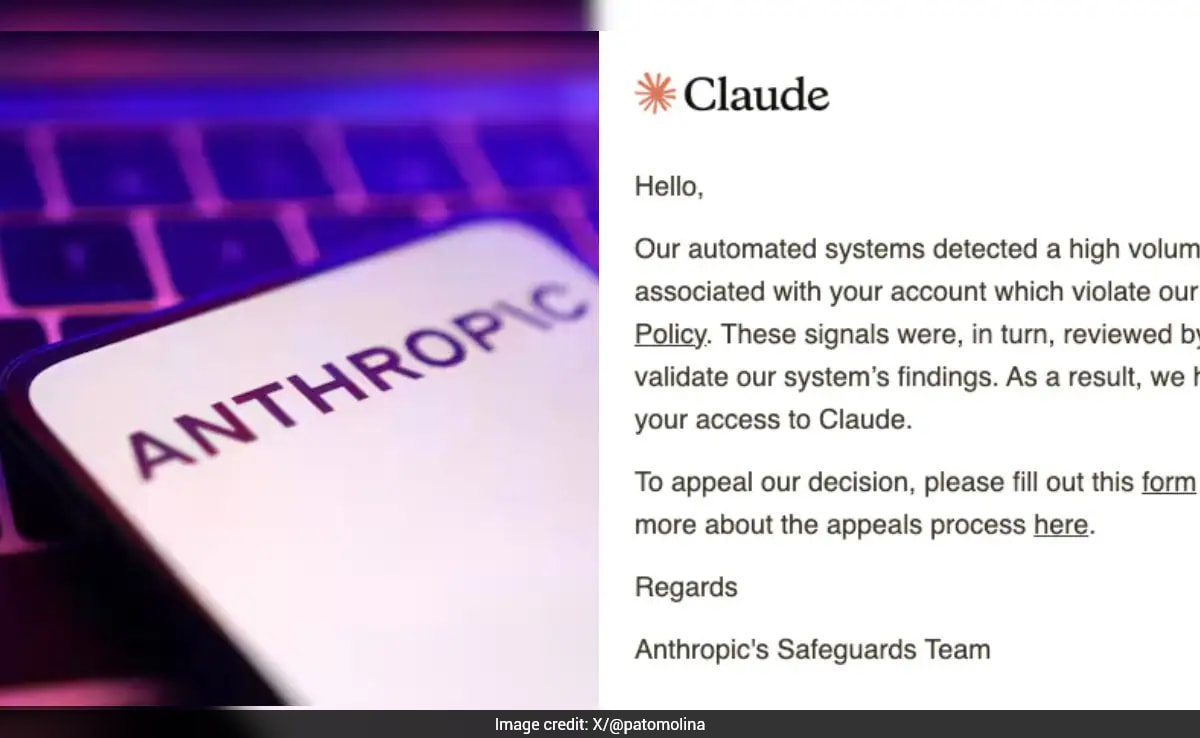

Anthropic's automated safeguards in its Claude AI system mistakenly suspended more than 60 accounts belonging to the Argentina-based fintech firm Belo, disrupting operations and cutting off employees' access to key workflows and data. The abrupt action, taken without a clear explanation or prior warning, highlighted the risks of over-reliance on a single AI provider.[AI generated]
