The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

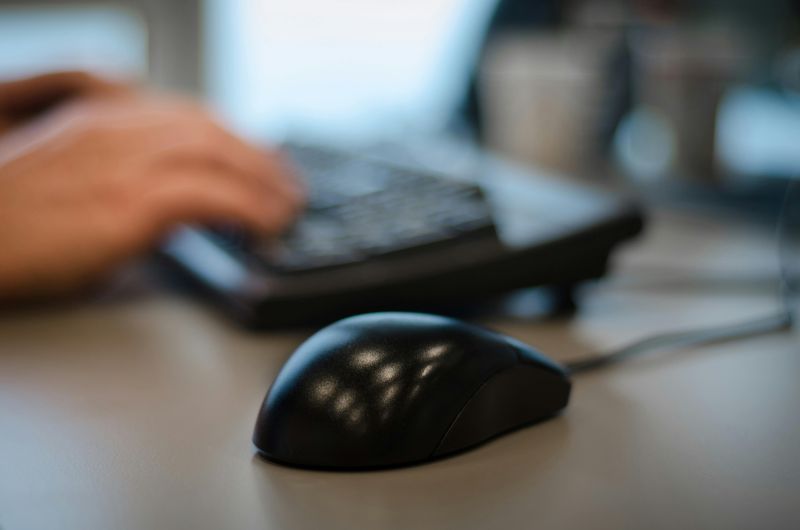

Meta implemented the Model Capability Initiative, AI-driven software that monitors and records detailed employee computer activity in the US to train workplace automation models. The mandatory, pervasive surveillance has triggered employee protests and privacy concerns, with experts warning of labor rights violations and a dystopian work environment. [AI generated]