The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

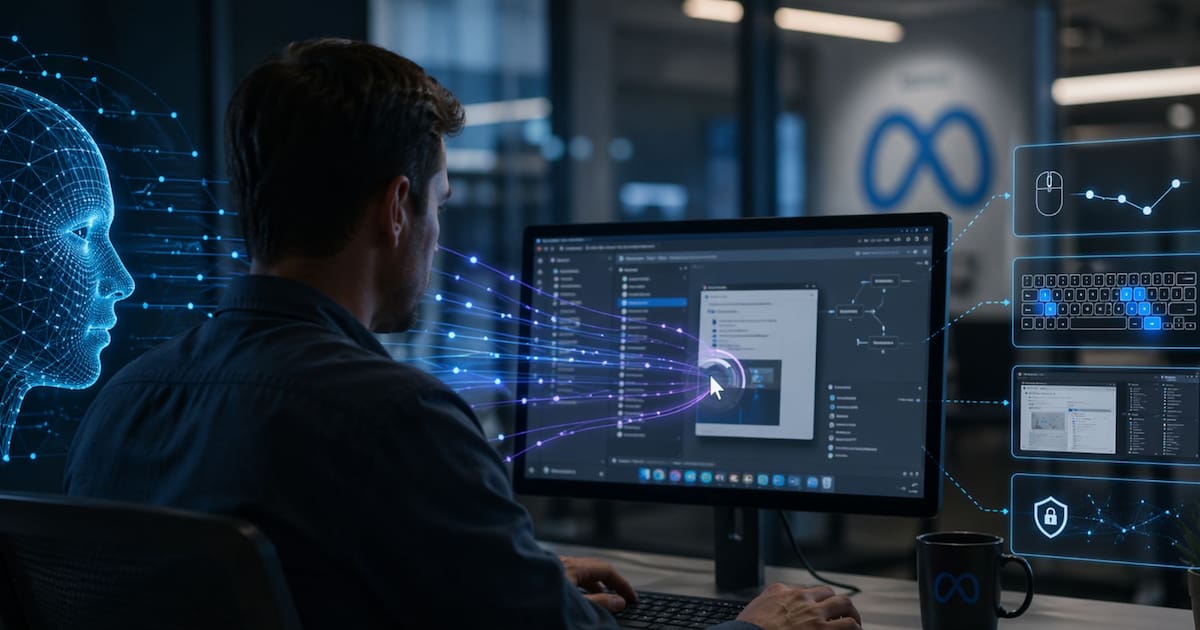

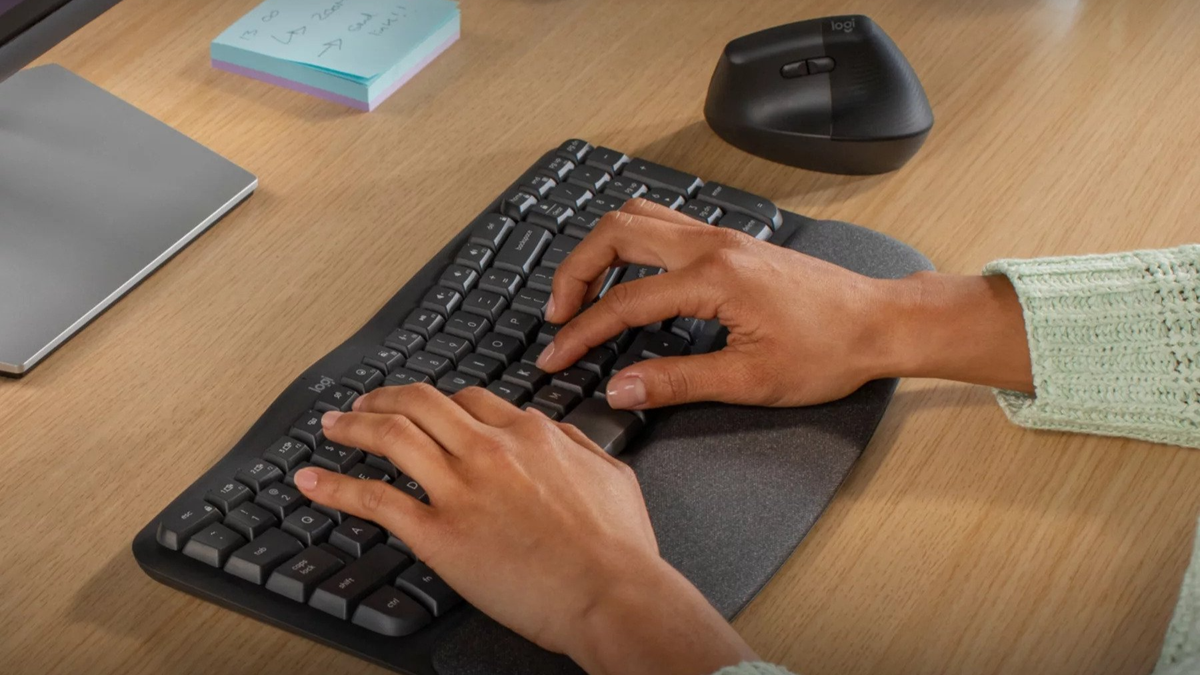

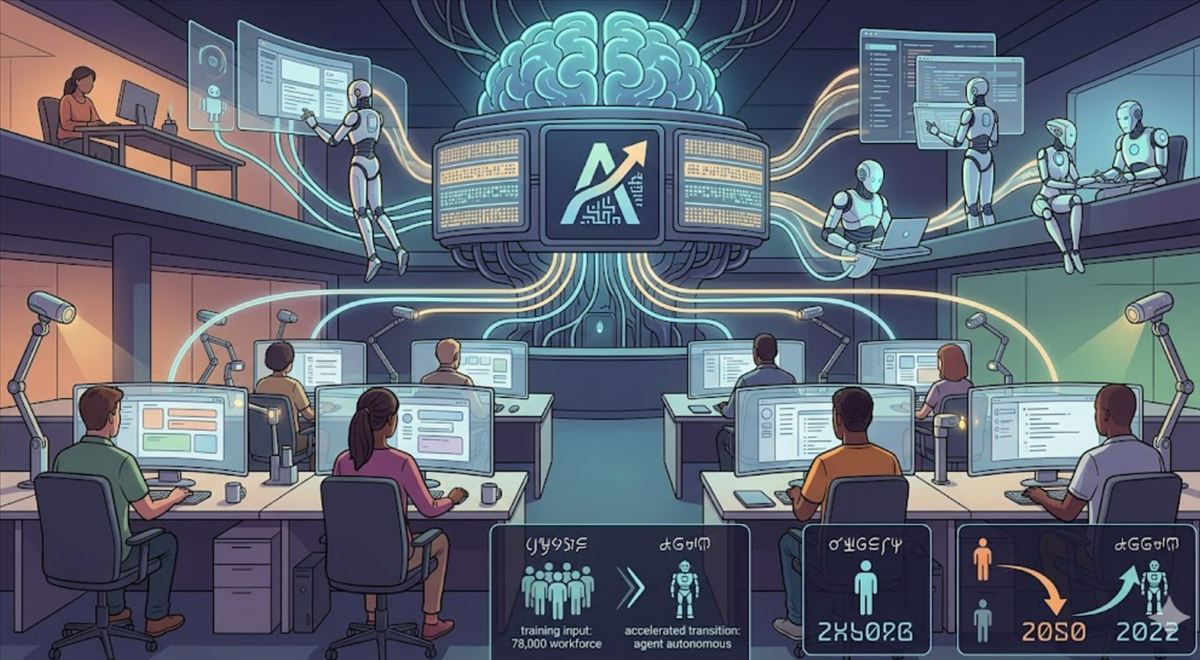

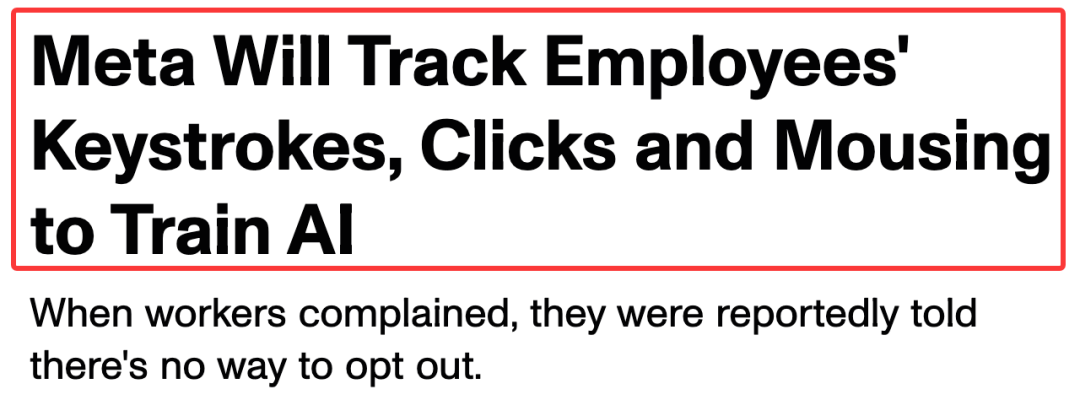

Meta is installing tracking software on U.S.-based employees' computers to log keystrokes, mouse movements, and screen content for AI training. The initiative, aimed at improving AI agents' ability to perform work tasks, raises concerns about employee privacy and potential labor rights violations.[AI generated]