The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

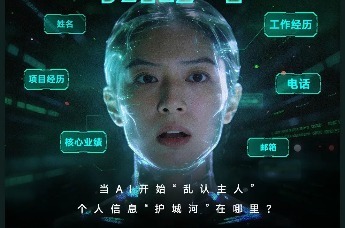

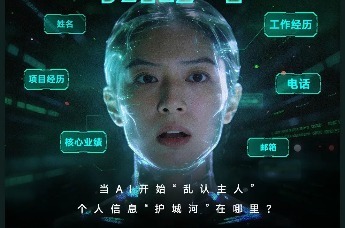

The Kimi large language model, developed by Moonshot AI, mistakenly disclosed one user's private resume, including their name, phone number, and work history, to another user during a routine task. The leaked data was verified as authentic, raising serious concerns about data isolation and privacy protection in AI systems. Legal action is underway.[AI generated]

Why's our monitor labelling this an incident or hazard?

The event explicitly involves an AI system (Kimi, a large language model) that, during normal use, disclosed another user's private and sensitive personal information without authorization. The harm is realized as personal data leakage and privacy violation, which is a breach of legal obligations protecting personal information and fundamental rights. The AI system's malfunction or engineering failure (e.g., data isolation failure, session contamination) directly caused this harm. The incident is not hypothetical or potential but has already occurred and caused harm, so it is an AI Incident rather than a hazard or complementary information.[AI generated]