The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

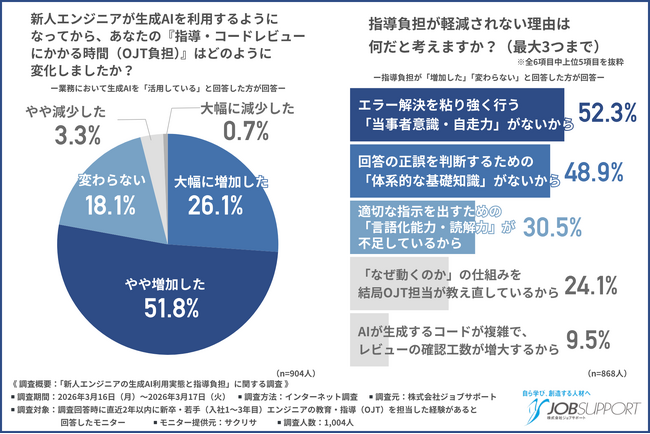

A survey of 322 Japanese IT engineers found that the widespread use of AI-generated code has significantly increased reviewer workload: 78.6% of respondents had experienced bugs or defects caused by AI-generated code, and nearly 90% reported a heavier review burden, often more than three extra hours per week to maintain software quality. [AI generated]