The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

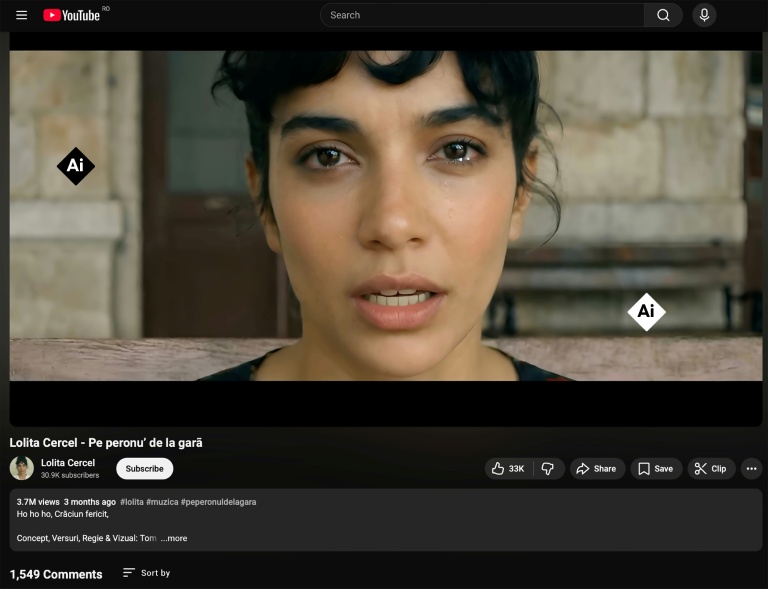

The AI-generated singer Lolita Cercel has become a sensation in Romania but has drawn criticism for perpetuating racist stereotypes about the Roma minority and for causing economic and reputational harm to real Roma musicians. The incident highlights concerns about AI's role in reinforcing discrimination and replacing human artists. [AI generated]