The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

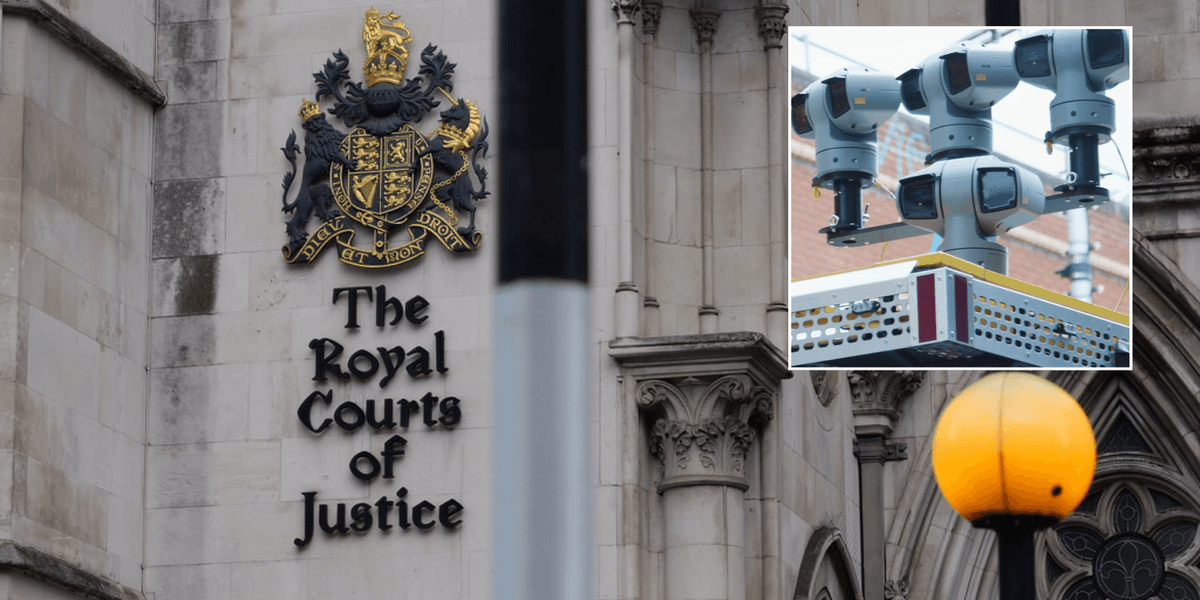

The UK High Court upheld the Metropolitan Police's use of live AI facial recognition technology, despite legal challenges citing misidentification, wrongful detention, and potential racial bias. The ruling allows a nationwide rollout, raising ongoing concerns about privacy violations and discriminatory impacts on individuals in London. [AI generated]