The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

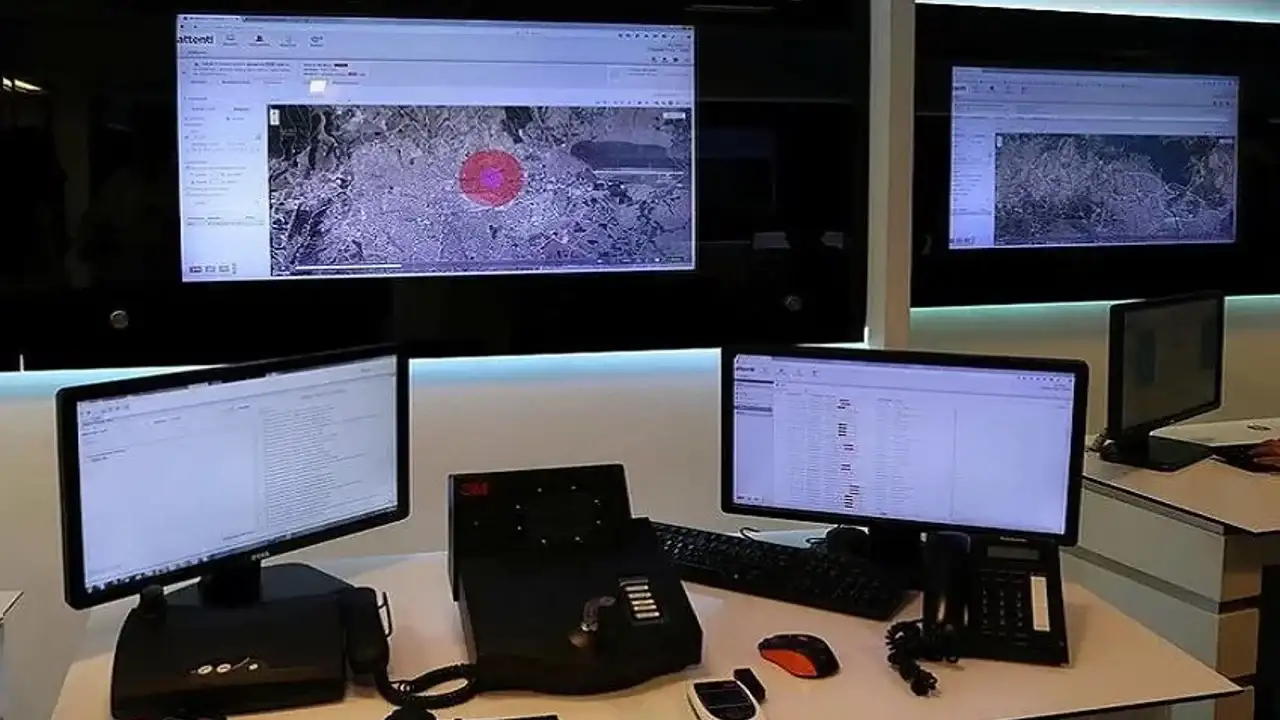

Turkey's Justice Ministry is preparing to implement the Biometric Signature and Tracking System (BİOSİS), which uses AI-driven biometric verification and GPS tracking via smartphones to monitor 450,000 individuals under judicial supervision. Although the system is intended to increase efficiency, it raises concerns about potential privacy violations and infringements of rights.[AI generated]