The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

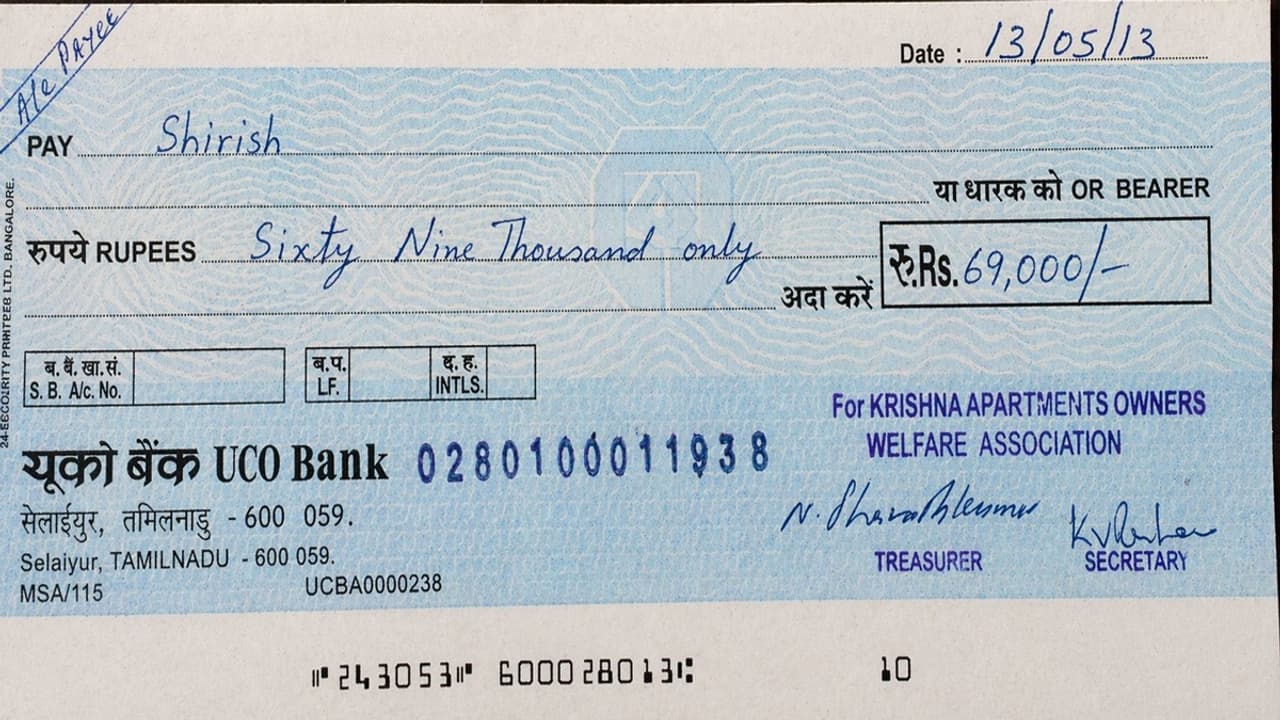

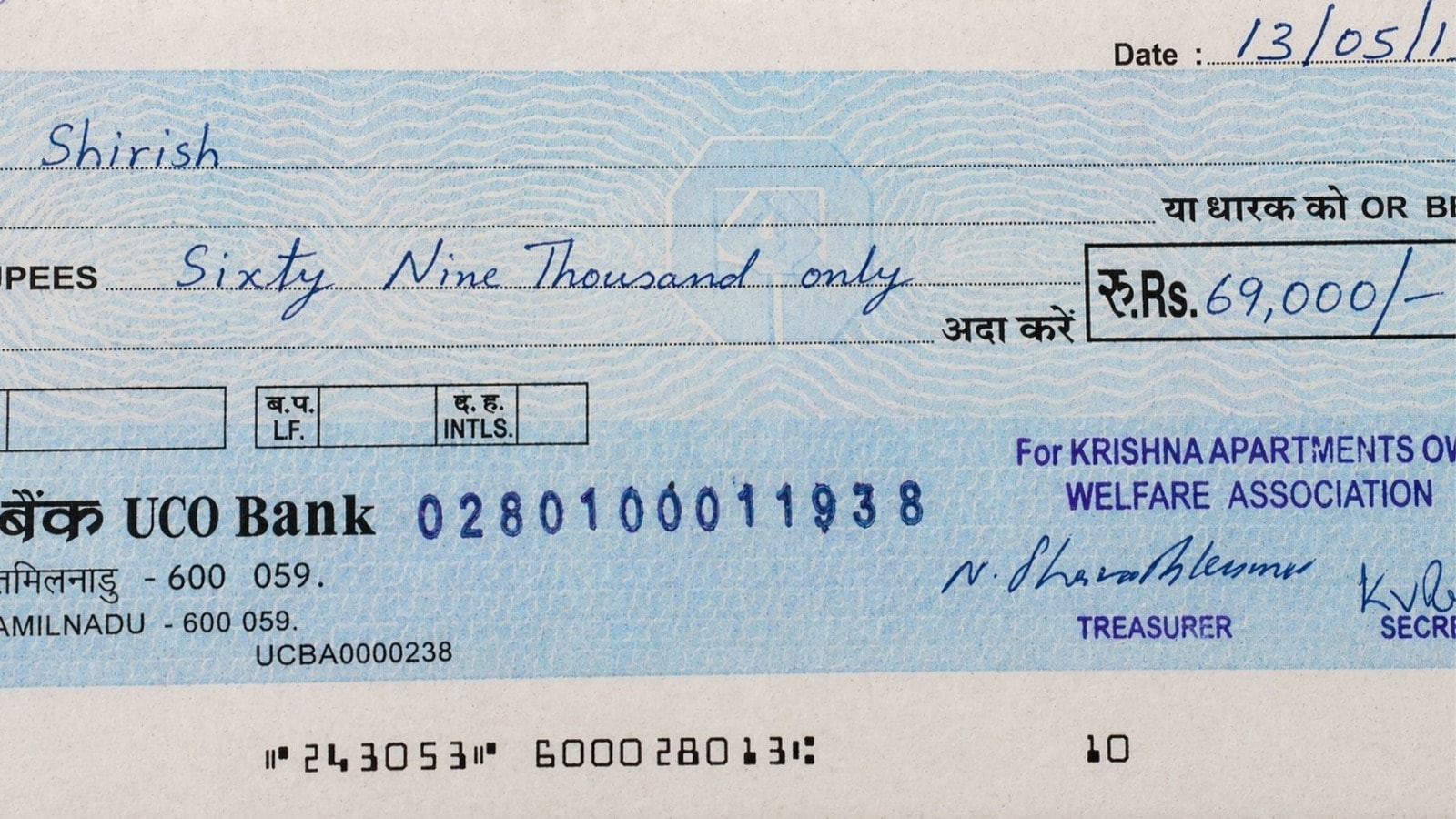

A viral social media post showed a hyper-realistic UCO Bank cheque created with ChatGPT Image 2.0, raising widespread concern that AI-generated images could be used to facilitate financial fraud. Although no actual harm occurred, the incident highlights the growing risk that AI tools could be misused to create convincing forged documents. [AI generated]