The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

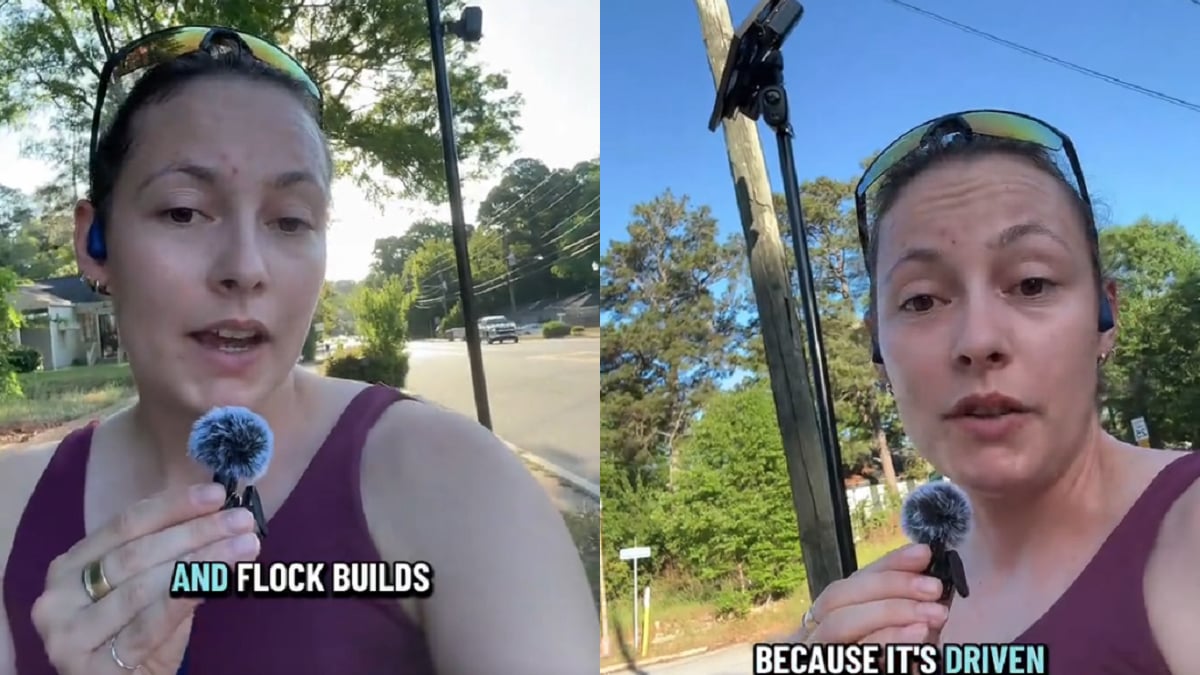

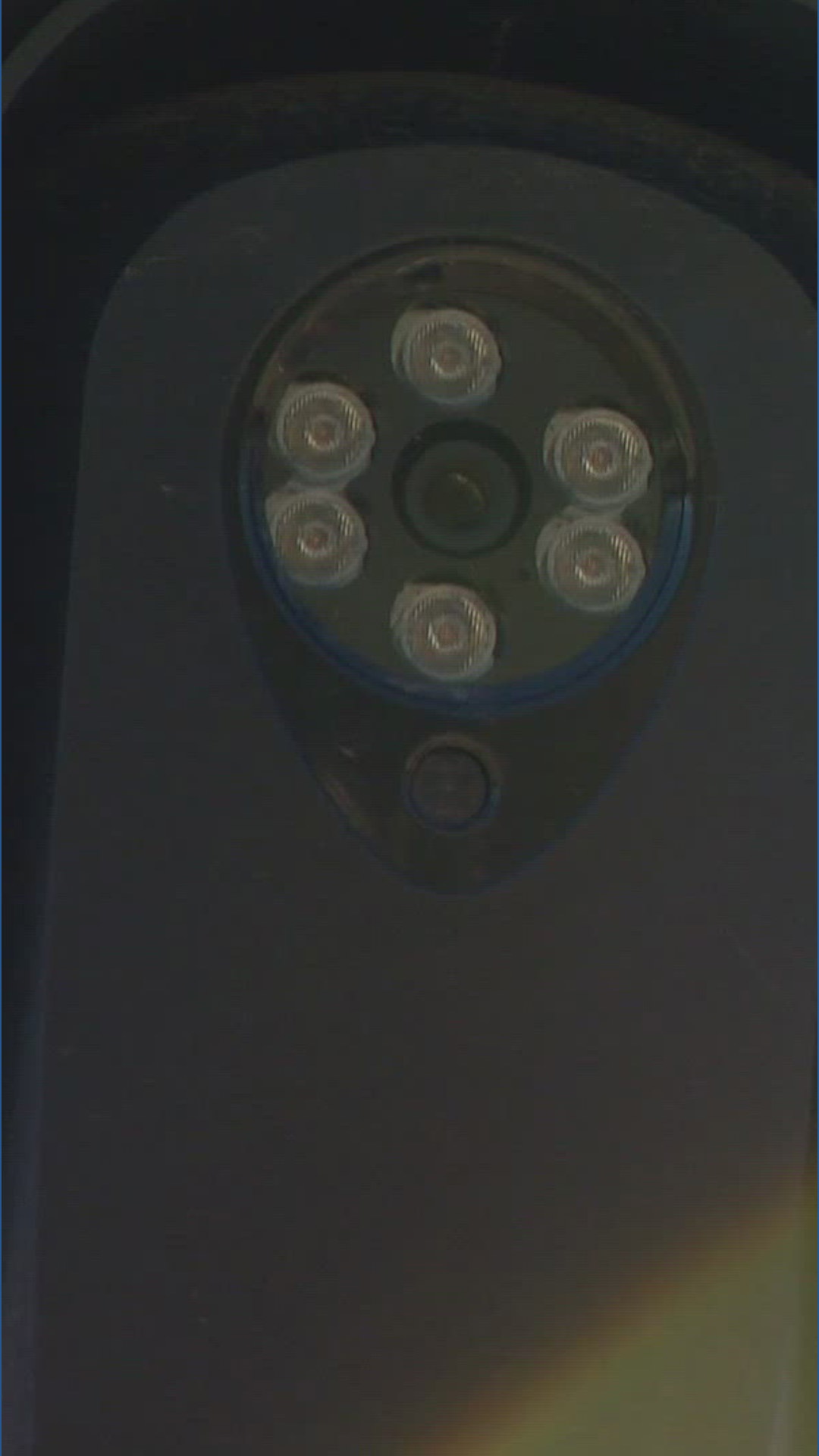

Florida law enforcement used AI-powered Flock license plate readers to track individuals linked to political protests, raising concerns about privacy and rights violations. In Georgia, residents report privacy harms and misuse of the systems, including stalking and the targeting of immigrants, highlighting the risks of mass surveillance enabled by these AI systems. [AI generated]