The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

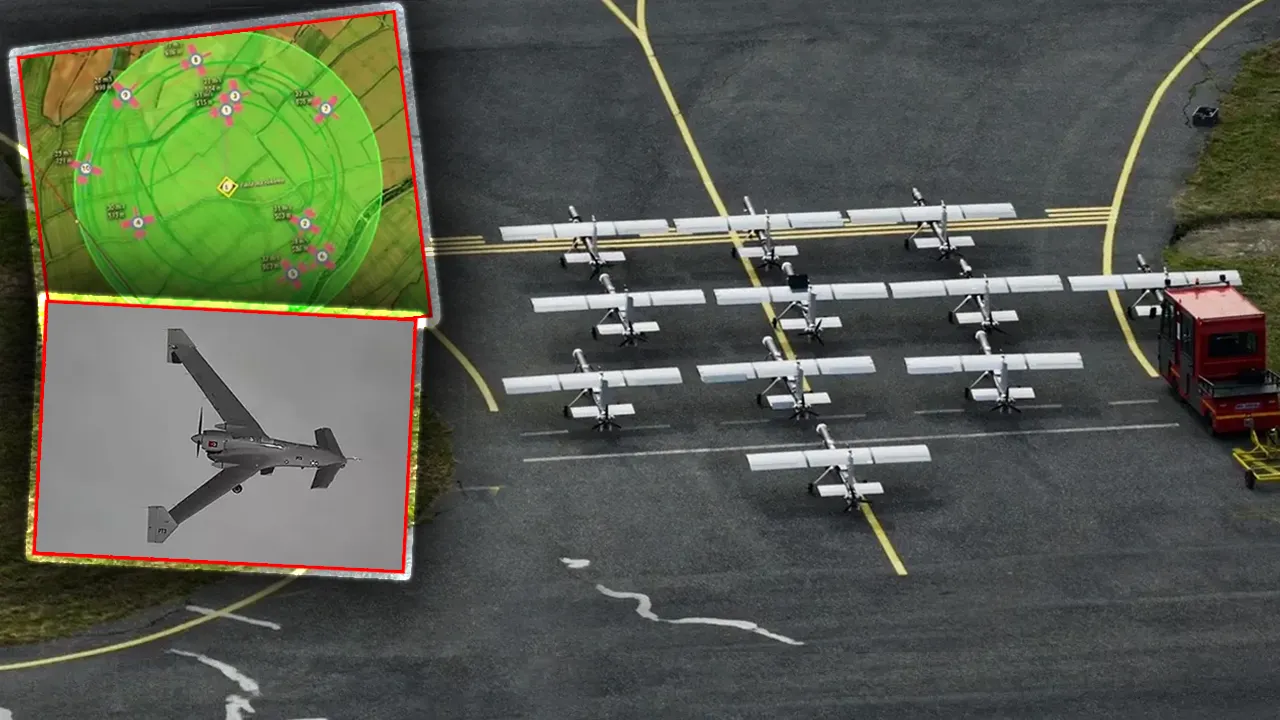

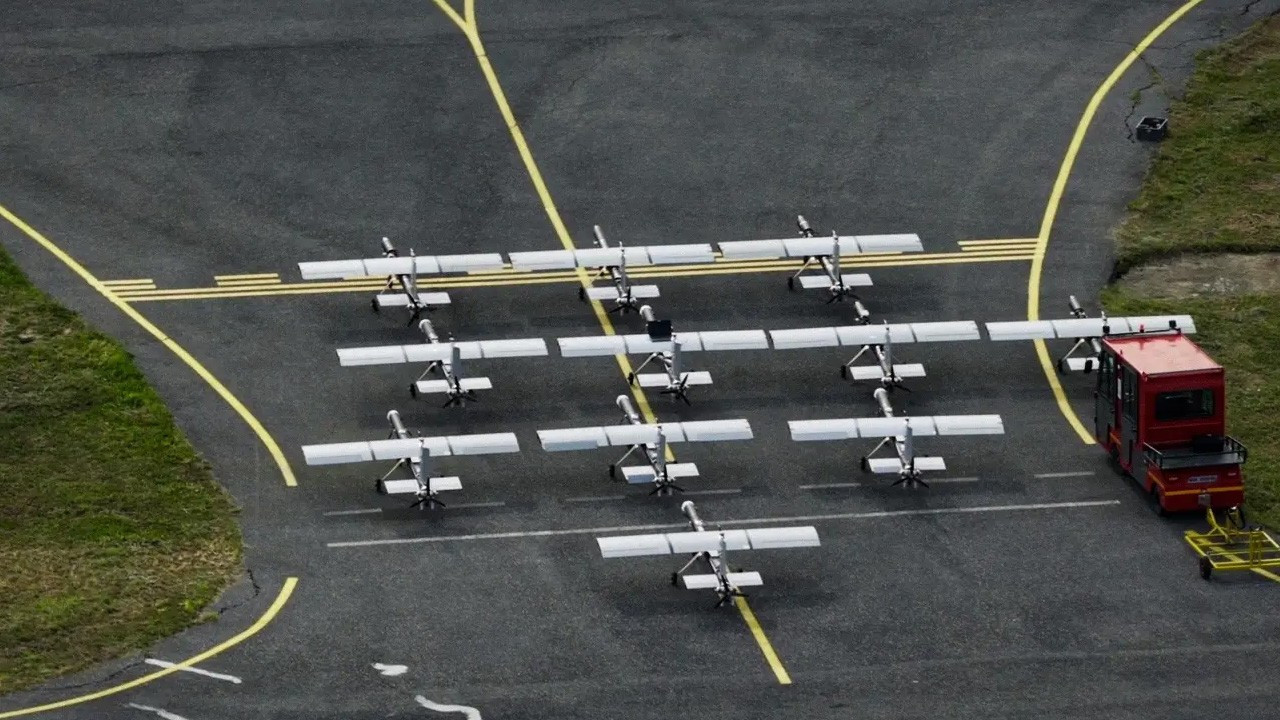

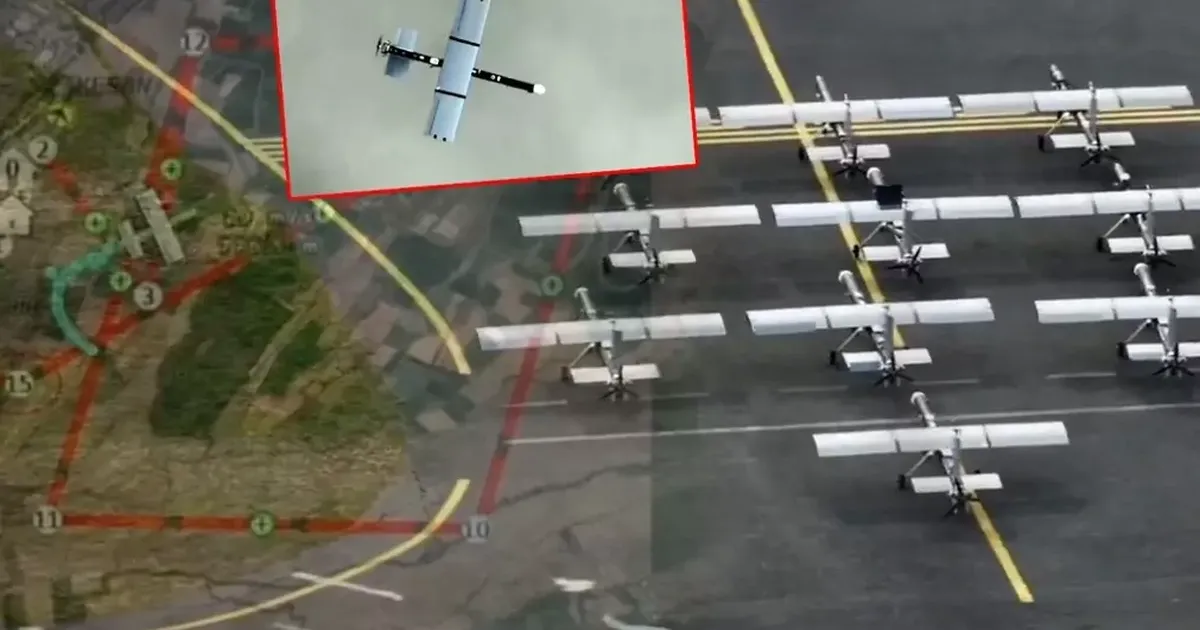

Baykar showcased its new AI-powered kamikaze drones, K2 and Sivrisinek, in Keşan, Turkey. The demonstration highlighted autonomous swarm navigation, target detection, and attack capabilities. These AI-enabled weapon systems, set to debut at SAHA 2026, pose potential risks of harm if deployed in conflict scenarios.[AI generated]