The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

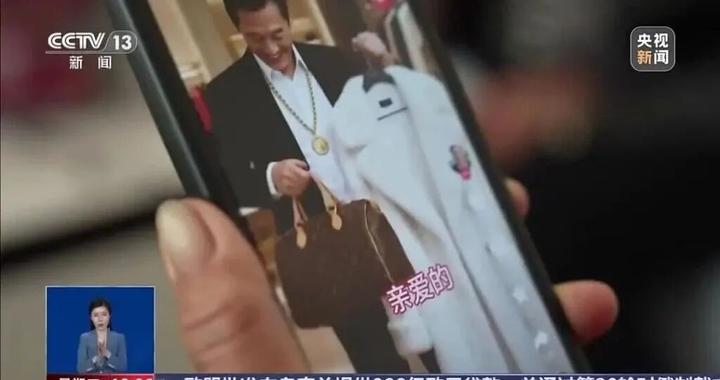

AI-generated videos on Chinese platforms have targeted elderly users with emotionally manipulative content, leading to financial scams and psychological harm. Separately, an AI-created fake video of a building collapse caused widespread panic and misinformation. Both incidents highlight the misuse of AI for deception and harm to vulnerable groups and communities. [AI generated]