The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

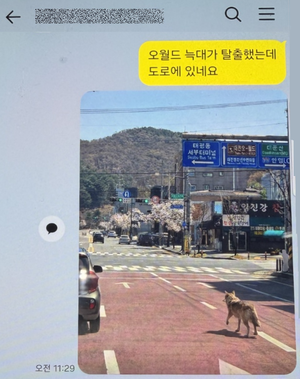

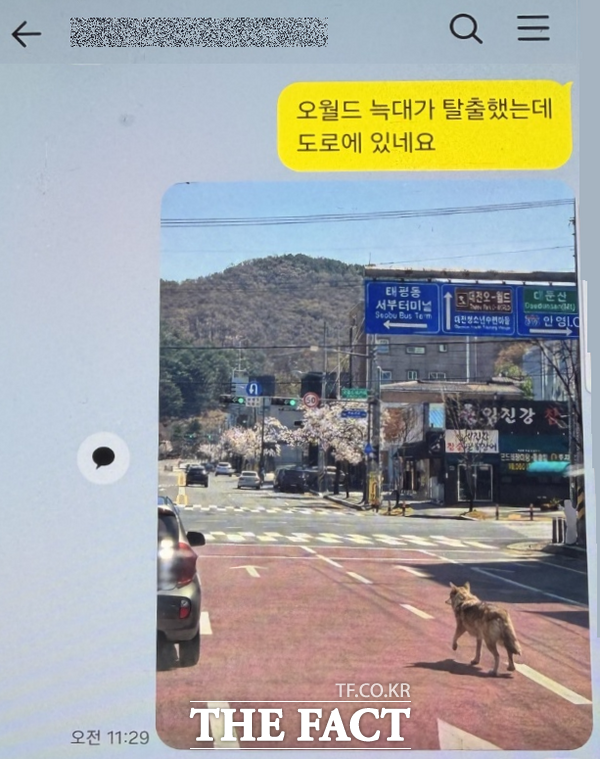

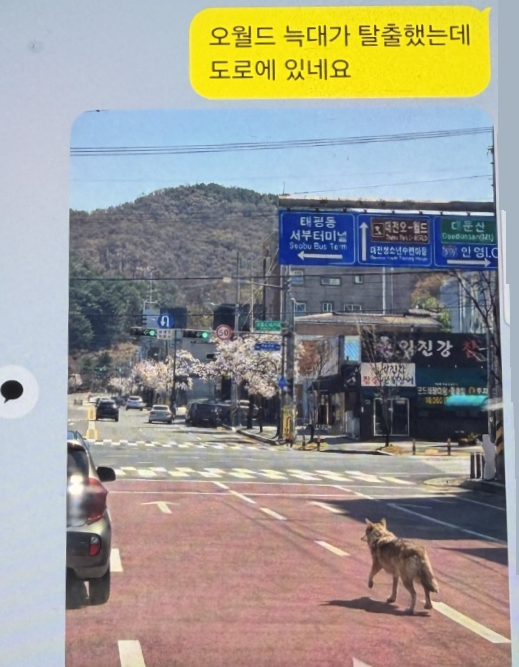

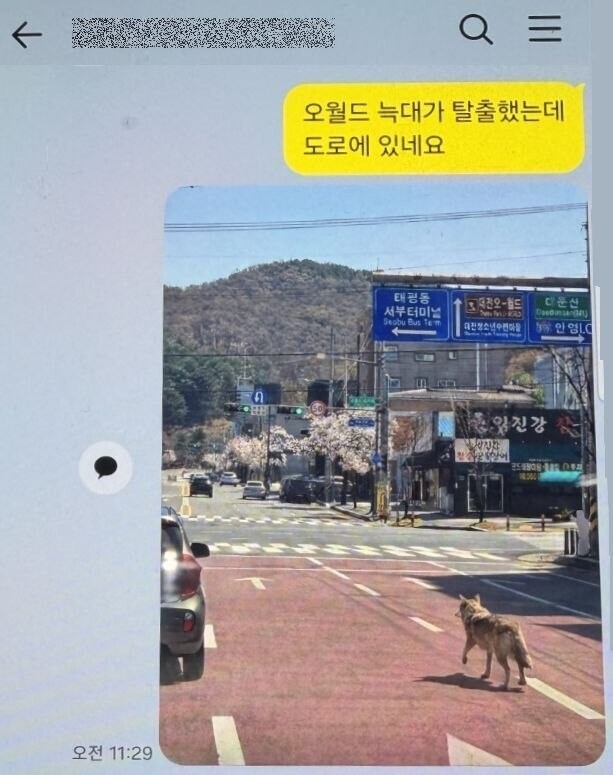

A man in Daejeon, South Korea, used AI to create and distribute a fake photo of an escaped zoo wolf, misleading authorities and the public. The image led emergency services to alter search operations and issue disaster alerts, and it delayed the wolf's capture, highlighting the real-world harm of AI-generated misinformation. [AI generated]