The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

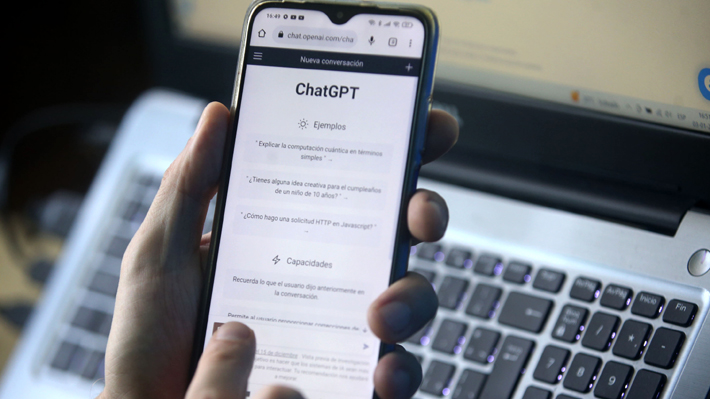

A Spanish judge was fined €1,000 by the General Council of the Judiciary for using ChatGPT to draft a judicial sentence, in breach of confidentiality rules and judicial protocols. The judge failed to protect case data or to inform colleagues, and the incident highlights legal and ethical concerns over AI use in sensitive judicial processes. [AI generated]