The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

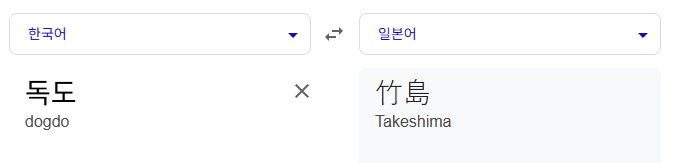

Google Translate, an AI-powered translation service, has been criticized for mistranslating 'Dokdo' as 'Takeshima' (the Japanese name for the disputed territory) and 'Kimchi' as 'Paochai' (a different Chinese dish). These errors have sparked public outcry in South Korea over cultural misrepresentation and misinformation. [AI generated]