The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

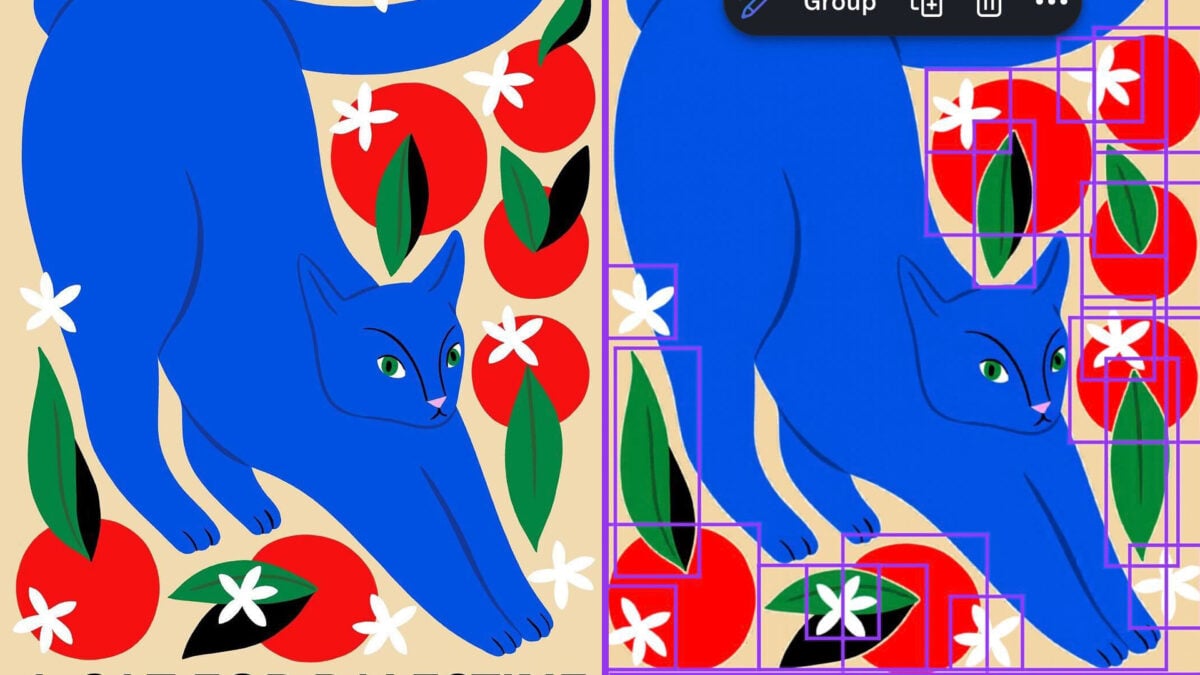

Canva's AI-powered Magic Layers tool was found to automatically replace the word 'Palestine' with 'Ukraine' in user-generated designs, sparking accusations of censorship and bias. The issue, which did not affect related terms like 'Gaza,' caused distress among users. Canva has apologized and implemented fixes to prevent recurrence. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event involves an AI system (Magic Layers) whose malfunction altered content in a biased and inappropriate way, directly affecting users and communities by misrepresenting politically sensitive terms. This constitutes harm to communities and a violation of rights related to accurate information and representation. The harm is realized, not just potential, because users experienced the biased output. Therefore, this qualifies as an AI Incident rather than a hazard or complementary information. [AI generated]