The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

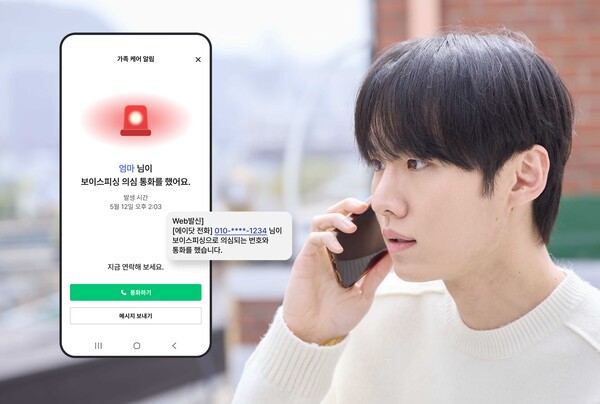

SK Telecom has enhanced its AI-powered call app, A.Dot, with a 'Family Care' feature that detects suspected voice phishing calls and immediately alerts up to 10 registered guardians via SMS or push notifications. By enabling rapid family intervention, the system aims to protect users in South Korea from the financial and psychological harm caused by such scams.[AI generated]