The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

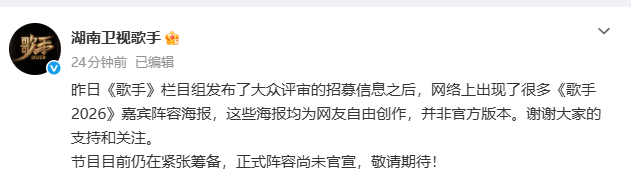

AI-generated posters falsely announcing the lineup for the Chinese music show 'Singer 2026' circulated online, misleading fans and even artists. The realistic visuals led to widespread confusion and reputational harm, prompting official denials and highlighting the risks of AI-driven misinformation in entertainment. [AI generated]