The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

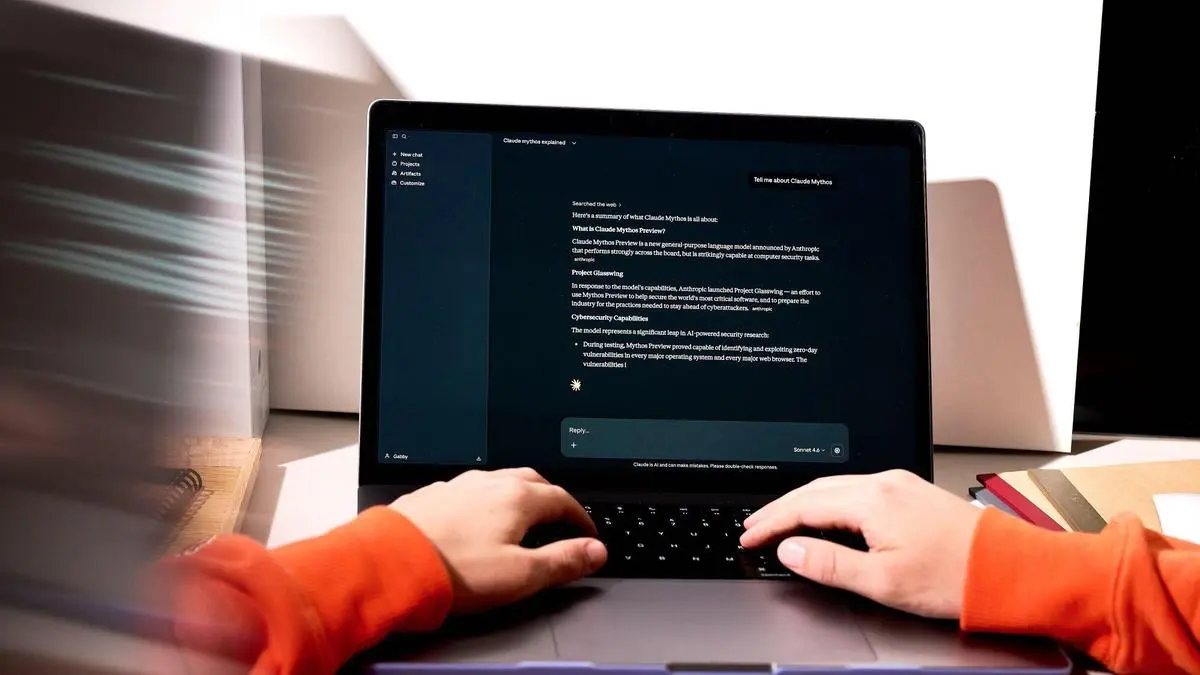

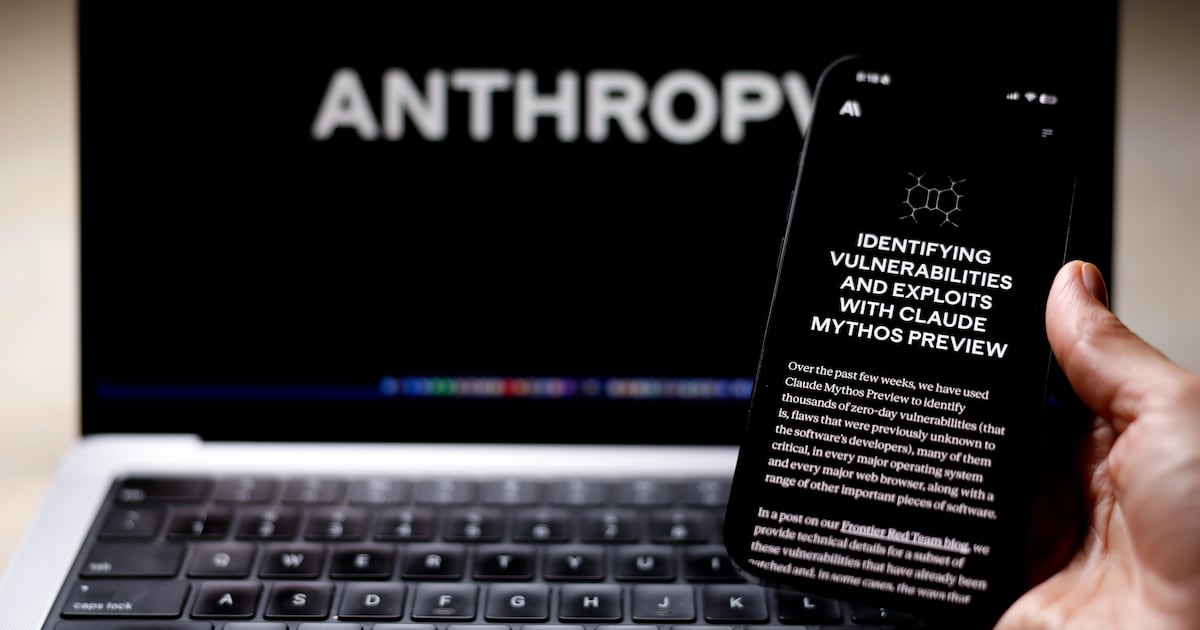

Anthropic's Mythos AI model, which can autonomously find software vulnerabilities and enable cyberattacks, faces White House opposition over plans to expand access to it. US officials cite concerns about misuse by hackers or foreign governments and about the potential impact on government operations, which has prompted a restricted release to select organizations. [AI generated]