The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

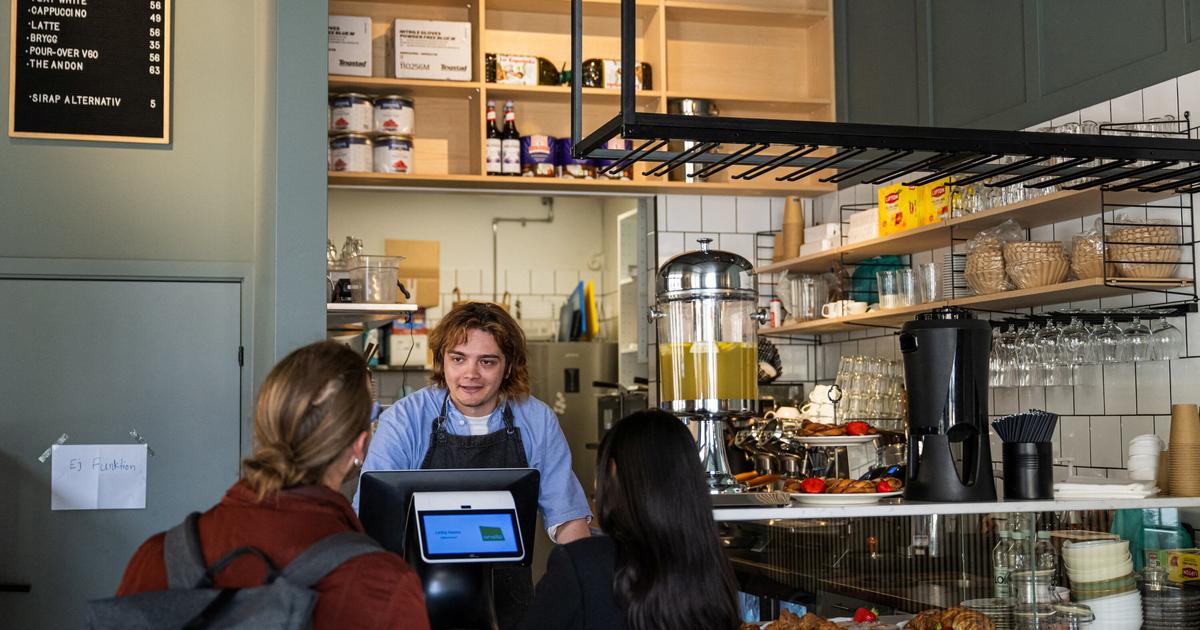

A café in Stockholm is managed entirely by an AI chatbot named Mona, responsible for hiring, supply orders, and daily operations. While the experiment highlights AI's potential in workplace management, it has led to operational inefficiencies and raised concerns about labor rights, employee well-being, and ethical risks, though no direct harm has yet occurred. [AI generated]