The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

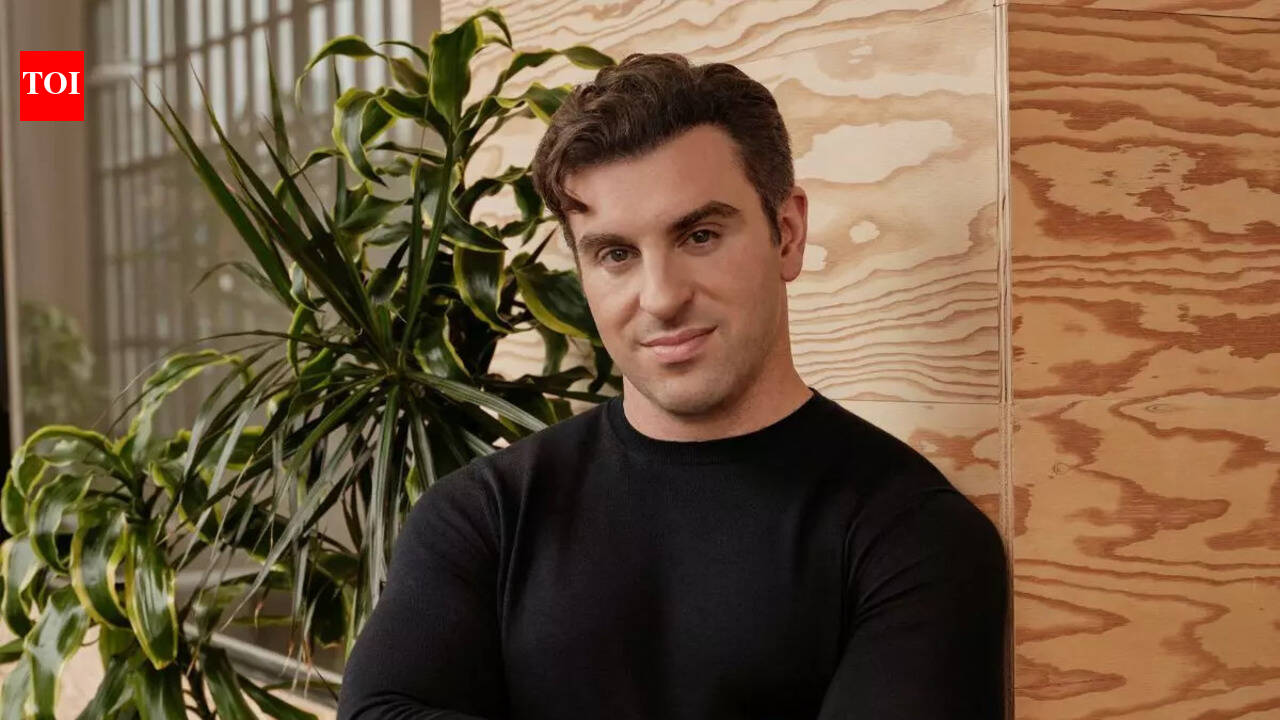

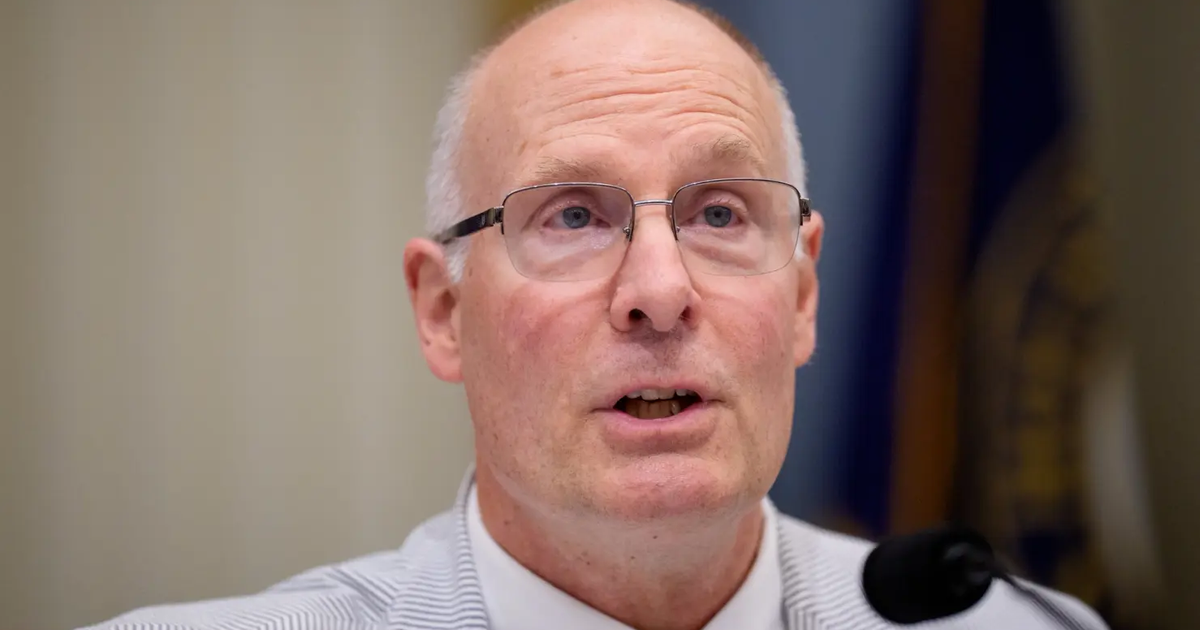

US House committees are investigating Airbnb and Anysphere for using Chinese-developed AI models, citing national security concerns over potential data exposure, censorship, and hidden vulnerabilities. Lawmakers have requested information and briefings from both companies to assess risks associated with Chinese AI technology in American businesses. [AI generated]