The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

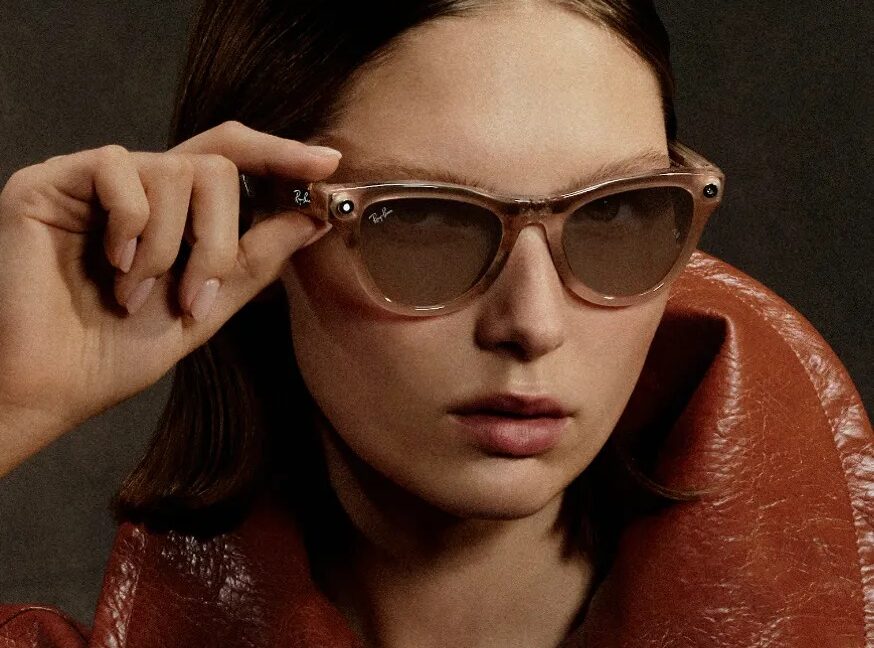

Meta terminated its contract with Kenyan firm Sama after more than 1,100 workers, who trained AI systems using footage from Ray-Ban smart glasses, reported exposure to graphic and private content. The layoffs followed whistleblowing about privacy violations and poor labor conditions, raising concerns over AI training practices and worker well-being.[AI generated]

Why's our monitor labelling this an incident or hazard?

The event explicitly involves AI systems (Meta's smart glasses and the associated AI training processes). Developing and using these AI systems required human review of sensitive personal data, which led to privacy harms and labor rights violations. The firing of workers after they spoke out suggests potential retaliation, further implicating labor rights. Regulatory investigations indicate that authorities have recognized these harms. Therefore, this event meets the definition of an AI Incident because of the direct and indirect harms caused by the AI system's use and the associated labor practices.[AI generated]
