The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

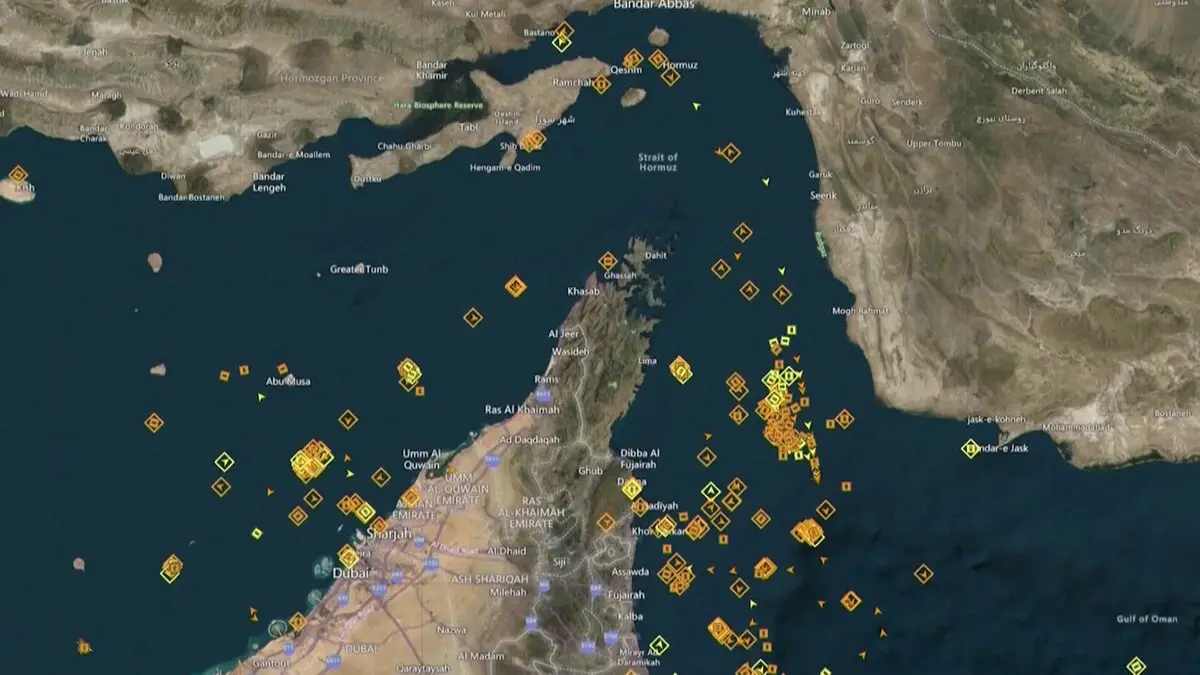

The US Navy has awarded Domino Data Lab a contract worth nearly $100 million to develop AI systems that rapidly detect underwater mines in the Strait of Hormuz. The AI integrates multi-sensor data to identify mines faster and more accurately, with the aim of enhancing maritime security and protecting global trade routes. [AI generated]