The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

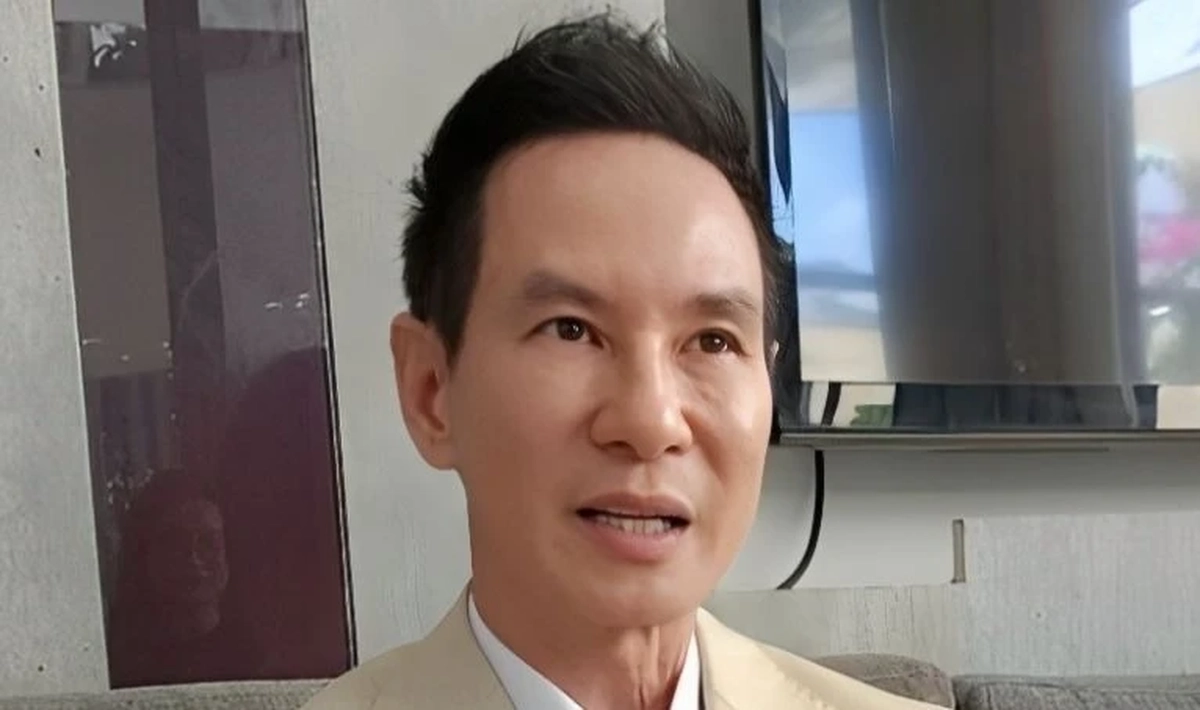

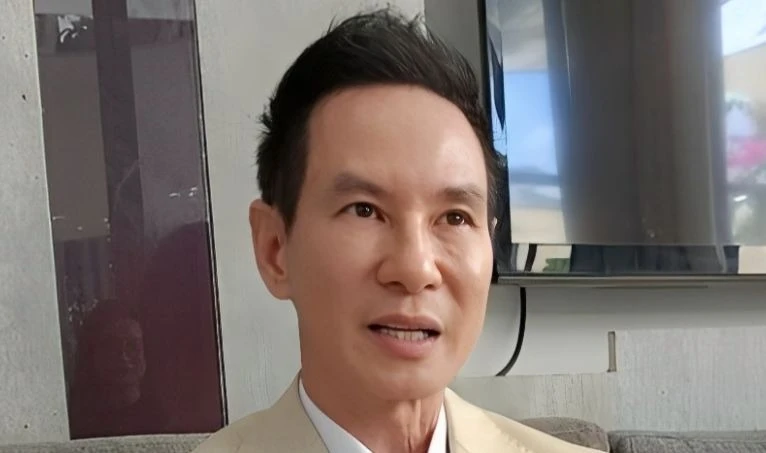

Vietnamese director Lý Hải and his wife warned about AI-generated fake videos and audio impersonating them to promote unverified products and scams. The sophisticated deepfakes deceive viewers, especially the elderly, leading to financial loss and reputational harm. Authorities have intervened in some cases, highlighting the growing misuse of AI for fraud. [AI generated]