The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

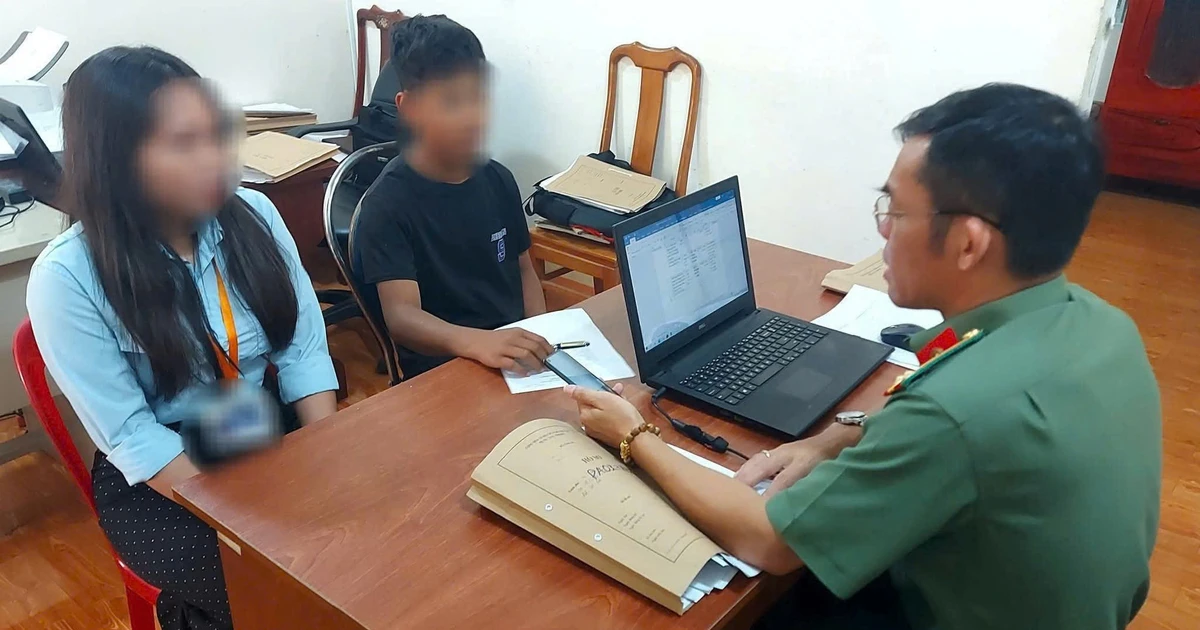

In Đồng Nai, Vietnam, a male student used AI software to create fake sensitive images of a female classmate, which were then spread on social media because of a personal conflict. The incident caused psychological and reputational harm to the victim, prompted police intervention, and highlighted the risks of AI misuse among students. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event explicitly involves the use of AI technology to generate fake images, which were then shared and caused harm to the victim's psychological well-being and reputation. This fits the definition of an AI Incident because the AI system's use directly led to harm to a person and harm to the community through misinformation and reputational damage. The involvement of law enforcement and educational responses further confirms the recognition of harm caused by AI misuse. [AI generated]